Introduction: The Redefinition of the Modern Battlespace

In the contemporary strategic environment, the fundamental nature of conflict has transcended physical geography, repositioning the human mind as both the primary weapon and the ultimate strategic objective. This paradigm shift is encapsulated in the concept of “Cognitive Warfare,” a domain where military and non-military activities are synchronized to gain, maintain, and protect a cognitive advantage over adversaries.1 Unlike traditional psychological operations (PSYOPs), which are often tactical, localized, and constrained by discrete campaign objectives, cognitive warfare represents an overarching, persistent effort to fracture societal cohesion, weaponize identity, and engineer epistemic chaos on a population scale.2 The strategic goal is not merely to deceive, but to fundamentally attack and degrade rationality, leading to the systemic weakening of adversarial institutions and the exploitation of inherent vulnerabilities.1

The convergence of artificial intelligence (AI), neurotechnology, and digital communications has created an ecosystem where influence can be scaled with unprecedented precision.2 Cognitive warfare operates continuously below the threshold of armed conflict, blending strategic competition with hybrid pressure to shape the conditions under which human beings form beliefs, allocate attention, and generate strategic intent.2 In this battlespace, the measure of effectiveness has shifted from short-term message penetration to durable, long-term changes in cognitive patterns, behavioral dispositions, and the willingness of a society to support military or political action.2

The Russian Federation treats cognitive warfare as a central pillar of statecraft, governance, and military strategy, and has invested heavily in operations designed to alter the decision-making processes of Western civilian populations and political leaders.3 By exploiting the architecture of human cognition, the Kremlin seeks to secure strategic objectives without the military effort that kinetic warfare would demand.4 This report investigates the theoretical foundations and operational mechanics of the Kremlin’s narrative engineering, specifically its “firehose of falsehood” and “ecosystem-speed” tactics. It then analyzes how Western intelligence, military initiatives, and open-source intelligence (OSINT) networks are deploying AI and sentiment analysis to counter these multi-domain threats, and explores why “strategic empathy” is needed to decipher adversary intent and prevent inadvertent geopolitical escalation.

1. The Theoretical and Strategic Foundations of Cognitive Warfare

To fully grasp the threat vector posed by adversarial information operations, it is necessary to establish the formal parameters of cognitive warfare. As articulated by the North Atlantic Treaty Organization (NATO) Allied Command Transformation (ACT), cognitive warfare is not merely the means by which modern actors fight; it is the fight itself.1 Western theorists and military scientists have increasingly recognized that the decisive terrain of the 21st century is behavior-centric.2

1.1 Expanding the Definition Beyond Psychological Operations

Historically, PSYOPs relied on the broadcast of tailored messages to target audiences to influence their emotions, motives, and objective reasoning. However, as noted in the NATO Chief Scientist’s 2025 Report on Cognitive Warfare, the contemporary discipline is substantially more expansive.2 A revised and highly precise definition characterizes cognitive warfare as the application of information and cognitive sciences to enhance or degrade the decision-making processes of political leaders, military commanders, and civilian societies, ultimately securing a positional advantage in the information environment.3

This definition highlights two axes: offense versus defense, and enhancement versus degradation. Unlike discrete cyber attacks or kinetic strikes, cognitive warfare relies on persistence, repetition, and cumulative effects that shape human beliefs gradually over extended temporal horizons.7 This temporal dimension complicates detection and assessment, rendering traditional intelligence metrics inadequate.7 Consequently, cognitive warfare must be evaluated through decision-centric outcomes: whether exposure translates into measurable changes in decision quality, speed, public trust, and civic behavior under contested conditions.2

1.2 The Convergence of Neuroscience, Technology, and AI (NeuroS/T)

The threat landscape is greatly magnified by the integration of emerging technologies. The convergence of neuroscience and technology (NeuroS/T) with AI enables precision influence at scale through the biological, psychological, and socially mediated modulation of human emotion and behavior.2 Adversaries view the human brain as an operational domain, envisioning an integrated system where humans are cognitively influenced by information technology systems.8

The battlespace is thus continuous, operating non-kinetically and blending strategic competition with wartime maneuvering.2 The target set has expanded dramatically from discrete military platforms to encompass entire human cognitive and social systems, attacking trust networks, identity narratives, and the foundational legitimacy of democratic institutions.2 In this environment, the objective is to create “epistemic chaos”—a state where the target population is no longer capable of distinguishing truth from falsehood, thereby inducing societal paralysis and neutralizing the target nation’s ability to project power or resist coercion.2

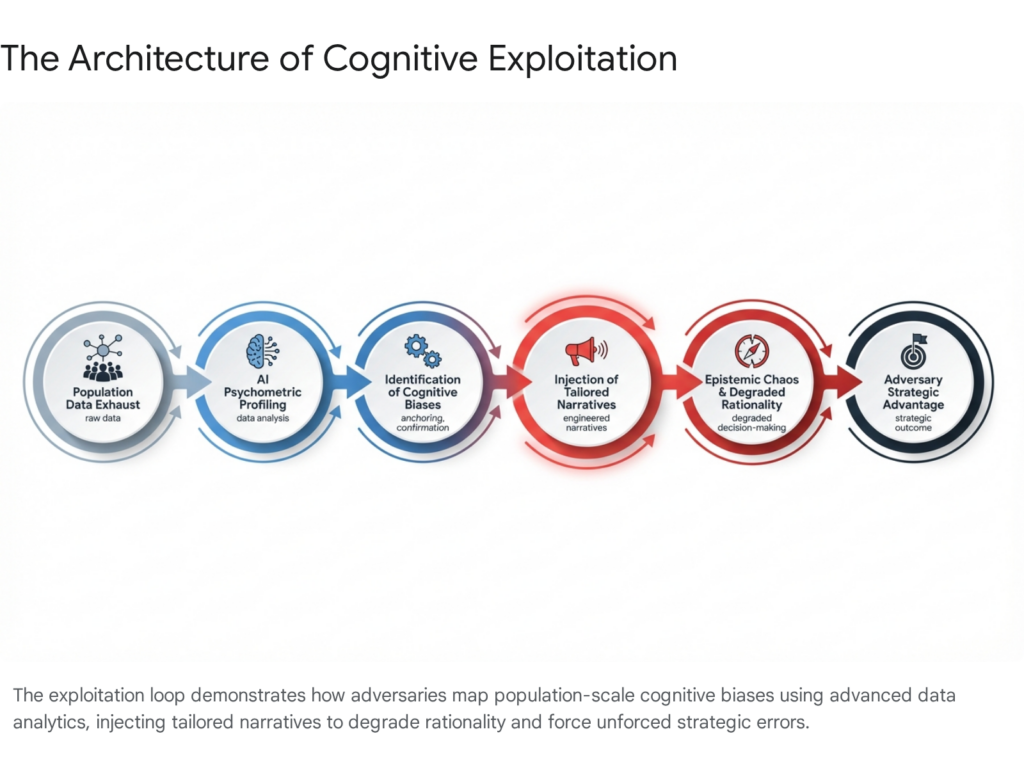

2. The Architecture of Exploitation: Mapping and Weaponizing Cognitive Blind Spots

To effectively manipulate a target population, an adversary must first understand and map the structural vulnerabilities inherent in human cognition. The human brain is optimized for rapid decision-making in survival situations and relies heavily on heuristics—mental shortcuts that produce systematic cognitive biases. In the context of cognitive warfare, these biases are operationalized as exploitable terrain.9

2.1 The Psychometric Profiling of Vulnerability and Social Physics

The weaponization of cognitive blind spots begins with the population-scale mapping of psychological vulnerabilities. The fragmented state of social bias research has historically created systematic blind spots within public discourse, leaving populations aware of individual biases but entirely oblivious to the groupthink, polarization dynamics, and information cascades that shape collective behavior.10 Adversaries leverage this asymmetry. By deploying predictive AI algorithms and analyzing vast troves of digital exhaust—social media interactions, geolocated movements, and consumption patterns—hostile actors conduct psychometric profiling at an unprecedented scale.10

This capability allows adversaries to construct rich mental models of target populations, echoing the academic discipline of “Social Physics” pioneered at institutions like MIT.12 Social physics posits that social learning and peer behavior are the dominant mechanisms of human behavior change, utilizing big data and real-time audio-visual monitoring to track the spread of ideas through human networks.12 Rather than treating populations as monolithic entities, cognitive warfare campaigns segment audiences based on their susceptibility to specific cognitive triggers. Advanced AI systems process these models to infer mental states, predict future actions, and offer context-aware informational stimuli designed to provoke desired emotional responses.14

The implications for military personnel are severe. In a theoretical but highly plausible operational scenario outlined by military researchers, AI-driven cognitive threat systems can analyze the social media history of a specific warfighter, identify deep-seated psychological vulnerabilities (such as impulsivity or marital insecurity), and deliver highly targeted, fabricated media, such as deepfakes depicting infidelity, to neutralize that individual through induced emotional trauma or irrational, violent action.8 The scenario illustrates how cognitive warfare could achieve significant tactical effects at low cost by weaponizing highly personalized cognitive data.8

2.2 Operationalizing Specific Cognitive Biases

The tactical implementation of cognitive warfare relies on the systematic exploitation of specific, well-documented biases. Autonomous systems and digital algorithms operating in high-dimensional environments frequently rely on prioritization heuristics to allocate attention, which inadvertently introduces cognitive biases such as salience, spatial framing, and temporal distortion.15 Adversaries actively exploit these:

- Anchoring: This principle dictates that human decision-making is heavily influenced by the first piece of information encountered.16 In information warfare, an adversary will rapidly inject a fabricated narrative into the information environment immediately following a crisis.16 Even when subsequent, meticulously fact-checked information is released, the target audience’s perception remains “anchored” to the initial falsehood, forcing defenders into a perpetually reactive posture.

- Confirmation Bias: Individuals inherently favor information that confirms their pre-existing beliefs while disregarding contradictory evidence.9 State-sponsored disinformation networks construct echo chambers that feed highly personalized, polarizing content to specific demographics, effectively weaponizing identity and exacerbating societal fault lines to fracture national cohesion.2

- Availability Heuristic and Salience: Humans judge the probability of events by how easily examples come to mind. By flooding the information environment with highly emotive, salient imagery (such as exaggerated threats of economic collapse, manufactured civil unrest, or cultural decay), adversaries artificially inflate the perceived likelihood of these events, driving populations toward reactionary, fear-based political decisions.15

The military and national security apparatus has increasingly recognized these vulnerabilities. Current research initiatives, such as those funded by defense agencies, are focused on mapping the specific biases of military leadership to identify “blocking biases” and “problem biases” that could paralyze command and control under the extreme stress of cognitive warfare.17 Overcoming these vulnerabilities requires whole-of-force resiliency efforts, immersive environmental training using psychophysiological monitoring, and the reinforcement of metacognition—the ability of an individual to actively monitor and regulate their own cognitive processes under multiform constraints.17

3. The Mechanics of Kremlin Narrative Engineering

The Russian Federation’s approach to information operations is heavily rooted in historical Soviet doctrines of Maskirovka (military deception) and reflexive control, fundamentally modernized for the digital age.19 The objective is not necessarily to persuade the adversary of a specific Russian truth, but rather to corrupt the concept of truth entirely, eroding national legitimacy, and sowing pervasive doubt regarding the integrity of democratic systems.18

3.1 The “Firehose of Falsehood”

The contemporary Kremlin propaganda model is most accurately described by intelligence analysts as a “firehose of falsehood.” This strategy is characterized by two defining features: the deployment of a massive number of channels and messages, and a shameless, inherent willingness to disseminate partial truths, contradictions, or outright fictions.21 Russian news networks such as RT and Sputnik, alongside state-sponsored online portals and vast ecosystems of alt-media, purposefully blend infotainment with disinformation, packaging deception in formats that mimic the appearance of proper journalistic news programs.22

The psychological efficacy of the firehose model relies largely on volume and repetition. People tend to judge repeated claims as more credible, a tendency known as the illusory truth effect. As individuals are repeatedly exposed to a specific narrative, even one they initially recognize and reject as false, the sheer volume of the messaging slowly degrades their cognitive resistance.7 Over time, people forget the source of the information or the fact that they previously rejected it, leading to a gradual, unconscious acceptance of the falsehood.21 Furthermore, the strategy intentionally floods the information space with contradictory claims; for instance, framing a global crisis as a manufactured hoax while simultaneously attributing it to a hostile biological weapon.25 This flood of contradictions promotes confusion, hysteria, and epistemic chaos, leaving audiences overwhelmed, cynical, and more likely to disengage from civic participation.2 Support for these false narratives across European societies has reached alarming levels, with surveys indicating acceptance by up to one-third of certain populations.23

3.2 Ecosystem-Speed Narrative Warfare and Core Templates

To maintain the necessary volume and velocity of the firehose, the Kremlin employs “ecosystem-speed” narrative warfare.25 This involves an extensive, well-resourced, and highly coordinated digital infrastructure comprising state actors, oligarch-owned media holdings, and decentralized non-state proxies.24 When a global event occurs, this ecosystem does not wait to conduct factual analysis. Instead, it utilizes automation and established informational pathways to rapidly shape and disseminate a message that resonates with target audiences.25 European investigative projects have exposed vast networks of these proxy sites, such as Lithuanian disinformation hubs owned by openly pro-Kremlin actors, which operate in tandem to amplify state-sponsored narratives under the guise of independent, local journalism.24

The speed of this ecosystem is enabled by the use of “predictable templates” and ancient cultural tropes, which let disinformation producers filter any new event through a familiar, pre-packaged narrative without pausing for research.25 Research analyzing over 13,000 cases of Kremlin disinformation identified five core narrative templates used consistently across Europe:

| Narrative Template | Core Mechanism & Psychological Appeal | Typical Application in Cognitive Warfare |
| --- | --- | --- |
| The Elites vs. The People | Frames covert, hidden decision-makers (e.g., global forums, specific financial families) as adversaries of the common citizen. Appeals to a universal sense of disenfranchisement. | Blaming economic downturns or public health mandates on shadowy globalist agendas, allowing the audience to project their own prejudices onto the “elite”.25 |
| Threatened Values | Depicts Western societies as suffering from severe moral decay, framing Russia as the bulwark of traditional, spiritual, and genuine European virtues. | Labeling liberal democratic policies as extremist ideologies, often equating progressive movements with societal collapse, fascism, or moral abomination.25 |
| Threatened Sovereignty | Claims that targeted nations are virtually entirely controlled by foreign masters (e.g., the US, NATO, the EU), stripping them of true independence. | Used heavily in Eastern Europe and the Baltics to suggest that national governments are mere puppets of Western intelligence agencies, undermining domestic institutional trust.25 |
| The Imminent Collapse | Suggests that the Western world is perpetually on the verge of civil war, economic ruin, or societal breakdown. | Amplifying domestic protests (e.g., the Yellow Vests in France) to project an image of a failing state, thereby discouraging democratic emulation and projecting an aura of Western weakness.25 |
| Hahaganda | A portmanteau of “haha” and “propaganda.” Uses ridicule, sarcasm, memes, and dark humor to discredit foreign leaders and evade serious discussion regarding state actions. | Deflecting blame during international crises (e.g., the Skripal poisoning or human rights abuses) by treating the accusations as absurd, comical, or unworthy of serious geopolitical debate.25 |

By deploying these templates, the Kremlin bypasses factual scrutiny entirely. Audiences are targeted based on sentiment, fears, and wishes; they accept the narrative not because it is factually accurate, but because it neatly aligns with the plot of “Overcoming the Monster,” positioning Russia as the hero against destructive, elite forces.25
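Because the templates are predictable, a defender can in principle tag incoming text against them. The following is a minimal sketch of that idea, not a description of any deployed system: the cue-word lists, the `TEMPLATE_CUES` name, and the `tag_templates` function are all hypothetical, and a realistic classifier would rely on trained models rather than hand-written keywords.

```python
from collections import Counter

# Hypothetical cue words for each narrative template (illustrative only).
TEMPLATE_CUES = {
    "elites_vs_people": {"elite", "elites", "globalist", "globalists", "bankers"},
    "threatened_values": {"decay", "decadent", "traditional", "immoral"},
    "threatened_sovereignty": {"puppet", "puppets", "masters", "occupied", "vassal"},
    "imminent_collapse": {"collapse", "ruin", "breakdown", "chaos"},
    "hahaganda": {"lol", "ridiculous", "clowns", "joke"},
}


def tag_templates(text: str) -> list[str]:
    """Return template names ranked by the number of matching cue words."""
    tokens = [t.strip(".,!?\"'").lower() for t in text.split()]
    hits = Counter()
    for name, cues in TEMPLATE_CUES.items():
        count = sum(1 for t in tokens if t in cues)
        if count:
            hits[name] = count
    return [name for name, _ in hits.most_common()]


print(tag_templates("The globalist elites want economic collapse."))
```

A keyword match of this kind only flags candidate texts for human review; it cannot establish intent or origin.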

3.3 The Doctrine of Reflexive Control

Beneath the superficial layer of disinformation lies the sophisticated strategic doctrine of Reflexive Control. Developed during the Soviet era and heavily modernized by the Russian military for the information age, reflexive control is defined as a means of conveying specially prepared information to an opponent to incline them to voluntarily make a predetermined decision that is advantageous to the initiator.27 It involves the profound manipulation of an adversary’s perception of the world, subtly altering their goals and methods of operation without their conscious realization.27

In the context of cognitive warfare against the West, the Kremlin uses reflexive control to shape the decision-making calculus of NATO leaders and European populations.4 By projecting a carefully curated image of Russian unpredictability, overwhelming military modernization, or the imminent threat of nuclear escalation, Russia attempts to trigger a specific reflex: Western paralysis, hesitation, or self-deterrence.4 If Western analysts fail to recognize the nuances of modern Russian reflexive control, viewing it merely as a relic of Soviet active measures, they risk remaining blind to the highly innovative, tech-enabled ways Russia currently shapes the strategic environment.20 Neutralizing reflexive control requires recognizing the attempt to shape reasoning—identifying the false premises being implanted by the adversary—and systematically rejecting them through physical action and transparent communication.4

4. Western Intelligence and the Technological Counter-Offensive

As the cognitive domain has emerged as decisive terrain, Western military institutions, intelligence agencies, and government bureaus have rapidly evolved their countermeasures. Acknowledging that simply refuting untruths is largely ineffective due to cognitive dissonance and anchoring bias, the West is shifting toward predictive modeling, algorithmic sentiment analysis, and proactive narrative strategies.18

4.1 The Mad Scientist Initiative and DARPA’s Predictive Defense

The U.S. Army’s Mad Scientist Initiative represents a vanguard effort to understand and adapt to the changing character of warfare, specifically regarding weaponized information and the integration of AI.18 Recognizing that human cognition is outpaced by the deluge of algorithmic disinformation, military strategists are integrating AI directly into the Boyd cycle—the Observe, Orient, Decide, and Act (OODA) loop.18

AI systems are deployed to triage vast quantities of data at scale, parsing complex social media environments to detect visual media manipulation, such as deepfakes, before they achieve viral velocity.18 These highly autonomous systems establish context by placing raw observations within historical and cultural frameworks, prioritizing data to prevent human commanders from suffering cognitive overload.18 Furthermore, the initiative emphasizes hardening the resilience of the force and their families, acknowledging that adversarial micro-targeting poses a direct threat to unit cohesion, financial stability, and operational security.18

Simultaneously, the Defense Advanced Research Projects Agency (DARPA) has spearheaded initiatives to simulate and predict online social behavior. The Computational Simulation of Online Social Behavior (SocialSim) program seeks to develop high-fidelity computational simulations to understand how information spreads and evolves, allowing the government to analyze strategic disinformation campaigns without compromising personal privacy.32 Complementary programs, such as Social Media in Strategic Communication (SMISC) and Artificial Social Intelligence for Successful Teams (ASIST), focus on tracking linguistic cues, patterns of information flow, and developing machine “Theory of Mind” (ToM) to infer the goals and situational knowledge of human actors operating within complex digital networks.14 These foundational AI theories are critical for building systems that can detect and neutralize bot-generated content and crowd-sourced deception campaigns.34
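To give a sense of what simulating information spread can involve, here is a minimal sketch of the independent cascade model, a standard textbook diffusion model. It is not the SocialSim methodology, which the source does not detail; the follower graph, the uniform reshare probability `p`, and the function name are assumptions made for illustration.

```python
import random


def independent_cascade(graph, seeds, p, rng):
    """Simulate one run of the independent cascade model.

    graph: dict mapping each account to the accounts that see its posts.
    seeds: accounts that share the narrative at step 0.
    p:     probability that an exposed account reshares (assumed uniform).
    rng:   a random.Random instance, passed in so runs are reproducible.
    """
    active = set(seeds)
    frontier = list(seeds)
    while frontier:
        next_frontier = []
        for node in frontier:
            for neighbour in graph.get(node, []):
                # Each newly active node gets one chance per inactive neighbour.
                if neighbour not in active and rng.random() < p:
                    active.add(neighbour)
                    next_frontier.append(neighbour)
        frontier = next_frontier
    return active


# Hypothetical five-account follower graph.
followers = {"a": ["b", "c"], "b": ["d"], "c": ["d"], "d": ["e"], "e": []}
rng = random.Random(0)
sizes = [len(independent_cascade(followers, ["a"], 0.5, rng)) for _ in range(1000)]
print(sum(sizes) / len(sizes))  # average cascade size over 1000 runs
```

Averaging over many runs, as in the last lines, is what lets an analyst compare how far a narrative is likely to travel from different seed accounts.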

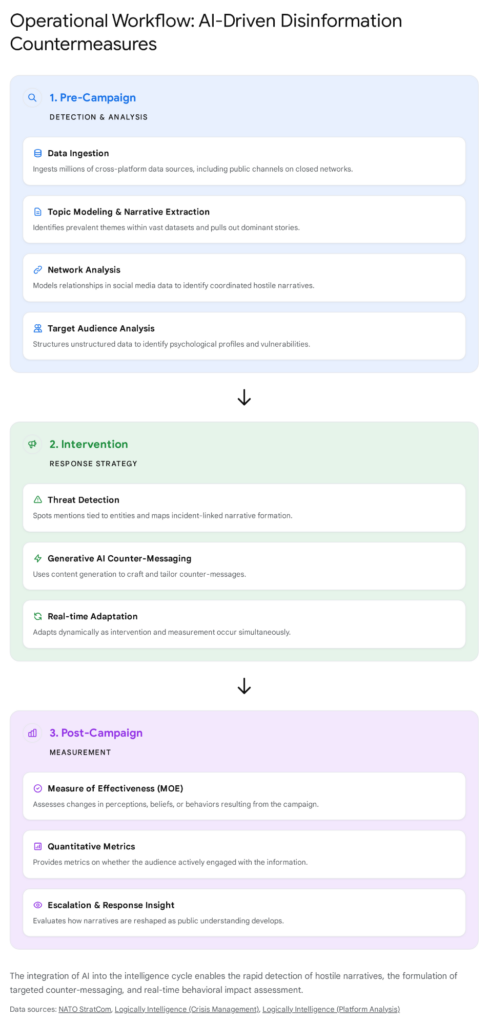

4.2 AI-Driven Sentiment Analysis and Operational Workflows

To continuously contest the information environment, entities such as the NATO Strategic Communications Centre of Excellence (StratCom COE) and the U.S. State Department’s Global Engagement Center (GEC) utilize sophisticated AI models and data processing pipelines.35

The operational workflow for countering Kremlin disinformation relies heavily on transitioning from basic keyword tracking to advanced network and sentiment analysis. AI is utilized to map the digital battlefield, analyzing connections and information diffusion to identify coordinated inauthentic behavior.38 Algorithms such as the Louvain method (for community detection) and k-core decomposition are used to identify specific communities and influential proxy nodes within retweet networks surrounding geopolitical conflicts.38
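As an illustration of k-core decomposition, the sketch below computes core numbers for a small retweet graph using only the standard library. The edge list and function names are hypothetical, retweets are treated as undirected links for simplicity, and the Louvain method is not shown; graph libraries such as NetworkX provide implementations of both.

```python
def build_graph(edges):
    """Build an undirected adjacency map from (account, account) pairs."""
    adjacency = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)
    return adjacency


def core_numbers(adjacency):
    """Return the core number of every node by repeatedly peeling
    the node with the smallest remaining degree."""
    degree = {node: len(nbrs) for node, nbrs in adjacency.items()}
    remaining = set(adjacency)
    core = {}
    k = 0
    while remaining:
        node = min(remaining, key=lambda n: degree[n])
        k = max(k, degree[node])  # core numbers never decrease while peeling
        core[node] = k
        remaining.remove(node)
        for nbr in adjacency[node]:
            if nbr in remaining:
                degree[nbr] -= 1
    return core


# Hypothetical retweet edges: a tight triangle (a, b, c) with a tail (d, e).
retweets = [("a", "b"), ("b", "c"), ("a", "c"), ("a", "d"), ("d", "e")]
print(core_numbers(build_graph(retweets)))
```

Accounts with the highest core number sit in the most densely interconnected part of the network, which is where analysts would look first for a coordinated amplification cluster. The `min` scan makes this version quadratic; it is meant for readability rather than large graphs.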

Crucially, Western intelligence has moved beyond simple “polar sentiment” (positive vs. negative) to analyze “directional sentiment.” This capability allows analysts to understand not just the emotional tone of a conversation, but toward whom or what the sentiment is maliciously directed, exposing the precise targeting parameters of an adversarial campaign.38
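The difference between polar and directional sentiment can be shown with a toy example. This sketch assumes a tiny hand-written lexicon and target list (`LEXICON` and `TARGETS` are illustrative, not drawn from any real tool) and attributes each sentence's score to every target named in that sentence; production systems would use trained aspect-based or stance models instead.

```python
from collections import defaultdict

# Toy lexicon and target list; both are illustrative assumptions.
LEXICON = {"corrupt": -1, "weak": -1, "failing": -1, "strong": 1, "heroic": 1}
TARGETS = {"nato", "eu", "government"}


def directional_sentiment(posts):
    """Sum lexicon scores per target, attributing each sentence's
    score to every target mentioned in that sentence."""
    totals = defaultdict(int)
    for post in posts:
        for sentence in post.lower().split("."):
            tokens = [t.strip(",!?;:\"'") for t in sentence.split()]
            score = sum(LEXICON.get(t, 0) for t in tokens)
            for target in TARGETS.intersection(tokens):
                totals[target] += score
    return dict(totals)


posts = [
    "NATO is weak and failing. The government is corrupt.",
    "Our government is strong.",
]
print(directional_sentiment(posts))
```

A purely polar score would report the whole sample as mildly negative; the per-target breakdown shows that the negativity is concentrated on one entity while sentiment toward the other is mixed.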

The workflow typically follows a structured, intelligence-driven methodology:

- Pre-Campaign Analysis (Observe/Orient): Large Language Models (LLMs) and topic modeling algorithms scan millions of multilingual data points to extract dominant adversarial narratives.38 Target Audience Analysis (TAA) is conducted using sentiment analysis to gauge audience vulnerabilities and psychological profiles, filtering out irrelevant content to generate contextual text embeddings.38

- Intervention (Decide/Act): Leveraging generative AI, communicators craft tailored, culturally resonant counter-messaging that avoids directly repeating the adversary’s claims.18 Platforms like the GEC’s “Disinfo Cloud” serve as centralized hubs that provide access to vetted technologies, ranging from manipulated information assessment tools to dark web monitoring, so that countermeasures can be deployed rapidly.18

- Measurement of Effectiveness (MOE): Post-intervention, AI sentiment analysis continuously tracks shifts in public perception and behavior, adapting the strategy in real-time based on quantitative engagement metrics and cross-platform behavior analysis.38
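The measurement step can be sketched as a before-and-after comparison of sentiment scores. The example below is a simplified illustration under stated assumptions: the scores are invented, the `sentiment_shift` function is hypothetical, and a raw difference of this kind shows association only, since other events may have moved sentiment during the same period.

```python
from statistics import mean, stdev


def sentiment_shift(before, after):
    """Return the change in mean sentiment and a pooled-variance
    standardized effect size (Cohen's d)."""
    diff = mean(after) - mean(before)
    n1, n2 = len(before), len(after)
    pooled_var = (
        (n1 - 1) * stdev(before) ** 2 + (n2 - 1) * stdev(after) ** 2
    ) / (n1 + n2 - 2)
    effect = diff / pooled_var ** 0.5 if pooled_var > 0 else 0.0
    return diff, effect


# Invented per-post sentiment scores in the range [-1, 1].
before = [-0.5, -0.3, -0.4, -0.6]
after = [-0.1, 0.0, -0.2, 0.1]
shift, effect = sentiment_shift(before, after)
print(round(shift, 2), round(effect, 2))
```

Reporting a standardized effect alongside the raw shift makes results comparable across audiences whose baseline sentiment varies by different amounts.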

4.3 Commercial Platforms in the Cognitive Defense Ecosystem

To support these workflows, intelligence organizations heavily rely on commercial threat intelligence platforms, forming a public-private partnership model essential for cognitive security.42

| Platform | Core Capabilities & Intelligence Applications |
| --- | --- |
| Cyabra | An AI-powered platform commissioned by NATO StratCom to uncover AI-driven social media manipulation.43 It excels in mapping conflicting locations, identifying geographic clusters of suspicious activity to understand where campaigns truly originate, circumventing adversary VPN usage.45 Its advanced language filter scans interactions across global demographics, measuring positive and negative sentiment regardless of the native tongue, allowing analysts to decode highly localized influence operations.46 |
| Logically Intelligence (LI) | A flagship threat detection tool combining advanced AI and human expertise to map cross-platform data, including closed networks like Telegram.47 LI detects coordinated behavior by tracking timing, pattern alignment, and shared narrative cues.48 It specializes in early pattern shift detection and regional geopolitical signal modeling to capture indicators tied to cross-border tension, allowing stakeholders to move from passive monitoring to active threat prevention before online narratives escalate into offline attacks.49 |

By identifying “lower-volume, distributed activity” that attempts to evade traditional detection parameters—such as the strategic insertion of crafted comments under posts by public figures rather than operating in isolated spam loops—these systems provide a formidable defense against ecosystem-speed narrative warfare.43
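Detection based on timing and shared narrative cues can be illustrated with a simple co-posting check. This is a generic sketch, not the method used by either platform above: the function name, the 60-second window, and the two-text threshold are assumptions chosen for the example.

```python
from collections import defaultdict
from itertools import combinations


def coordinated_pairs(posts, window_seconds=60, min_shared=2):
    """Find account pairs that repeatedly post identical text close in time.

    posts: iterable of (account, unix_timestamp, text) tuples.
    """
    by_text = defaultdict(list)
    for account, ts, text in posts:
        # Normalize case and whitespace so trivial edits still match.
        by_text[" ".join(text.lower().split())].append((account, ts))

    shared = defaultdict(int)
    for items in by_text.values():
        seen = set()
        for (a1, t1), (a2, t2) in combinations(items, 2):
            pair = tuple(sorted((a1, a2)))
            if a1 != a2 and abs(t1 - t2) <= window_seconds and pair not in seen:
                seen.add(pair)  # count each distinct text once per pair
                shared[pair] += 1
    return {pair for pair, n in shared.items() if n >= min_shared}


# Invented sample: u1 and u2 echo each other twice within seconds.
sample = [
    ("u1", 0, "Sanctions hurt only Europe"),
    ("u2", 20, "sanctions hurt only  europe"),
    ("u1", 500, "The elites lie"),
    ("u2", 530, "The elites lie"),
    ("u3", 9000, "The elites lie"),
]
print(coordinated_pairs(sample))
```

Requiring several shared texts before flagging a pair reduces false positives from ordinary users who happen to repost the same viral message once.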

5. The OSINT Vanguard and Geolocated Reporting

Perhaps the most disruptive countermeasure to state-sponsored cognitive warfare has been the democratization of intelligence through Open-Source Intelligence (OSINT). Historically, the collection and analysis of intelligence was a highly classified monopoly held by nation-states.51 Today, global networks of civilian practitioners, non-governmental organizations, and specialized investigative outfits utilize publicly available data to penetrate the fog of war, fundamentally altering the global information environment.51

5.1 Debunking Through Transparent Geolocation

Organizations such as Bellingcat have pioneered the use of rigorous geolocation techniques, satellite imagery analysis, and digital forensics to debunk Kremlin narratives in near real time.53 By analyzing public CCTV footage, social media posts, and commercial satellite data (such as Sentinel-2 L1C and Planet SkySat), OSINT researchers can establish the factual reality of incidents on the ground, bringing unprecedented transparency to conflict zones.53 During severe crises, such as the bombing of the Mariupol theater or the execution of prisoners of war, OSINT networks have published detailed evidence linking state actors to the events.55 This capability can act as a deterrent and directly challenges the “factual ambiguity” that adversaries rely upon for plausible deniability, exposing the contradictions in Russian official narratives.53

5.2 Collaborative Dashboards and Information Resilience

The integration of OSINT into broader counter-disinformation strategies is operationalized through collaborative, global dashboards. The “Eyes on Russia” map, managed by the Centre for Information Resilience (CIR), aggregates verified, geolocated data points regarding military movements and conflict incidents.58 This interactive platform allows investigators to visualize data by category, sector, and date, establishing wider contexts and patterns of behavior that are invisible when analyzing isolated incidents.55

Similarly, the #UkraineFacts database, launched by the International Fact-Checking Network, tracks and debunks false reports and disinformation globally.60 Operating across dozens of countries, these platforms provide a vital resource for journalists and policymakers facing the firehose of falsehood.60 By rapidly circulating verifiable, on-the-ground evidence and maintaining detailed archives of human rights violations, the OSINT community erodes the influence of aggressive disinformation campaigns and shows that transparent, crowdsourced verification can blunt ecosystem-speed cognitive attacks and reshape international sentiment.51

6. Strategic Empathy: Understanding Intent to Prevent Inadvertent Escalation

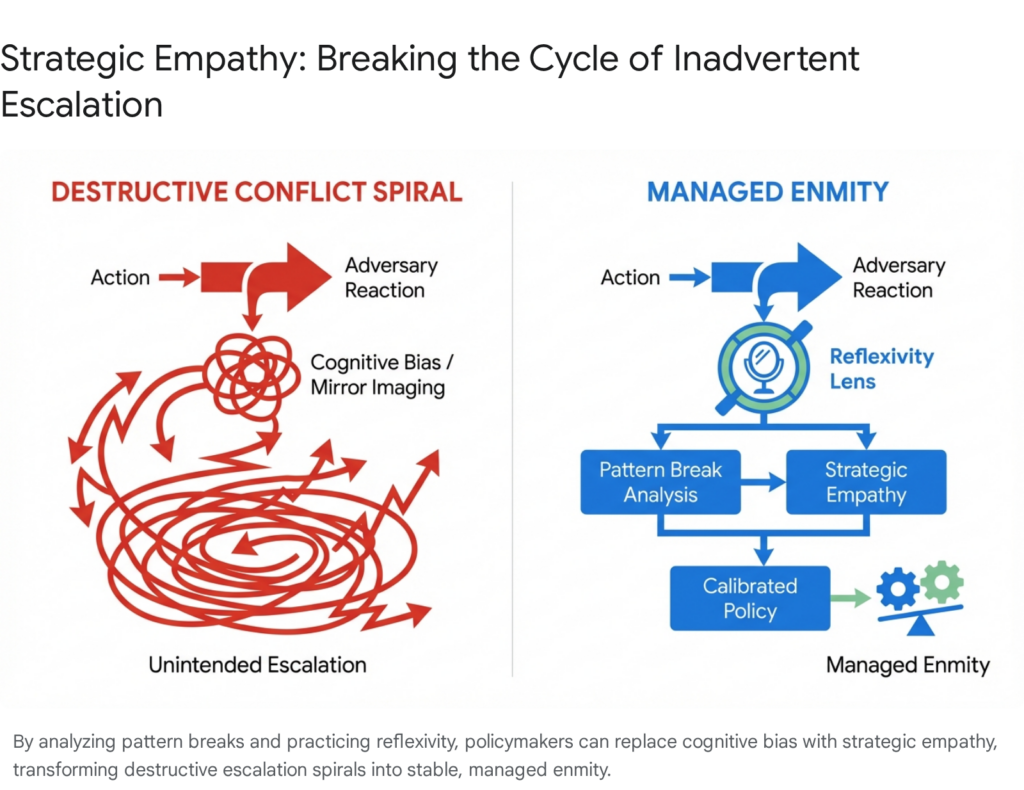

While advanced AI and OSINT provide the tactical tools to detect and counter cognitive warfare, strategic success requires a profound understanding of the adversary’s underlying motivations. Without this understanding, defensive actions can easily trigger the very conflicts they are designed to prevent. This necessitates the rigorous application of “Strategic Empathy.”

6.1 Conceptualizing Strategic Empathy and Reflexivity

In the realm of intelligence and foreign policy, strategic empathy is defined as the sincere effort to identify and assess the genuine patterns of an adversary’s actions—specifically regarding the acquisition, threat, and use of strategic weapons or cognitive warfare tools—and the underlying drivers and constraints that shape those actions.62 Drawing heavily from the work of historian Zachary Shore, strategic empathy functions as a critical analytical lens and a mindset.62

Crucially, strategic empathy is policy agnostic; it is emphatically not synonymous with sympathy, apologism, or agreement with the adversary’s worldview, nor does it seek to justify hostile actions.62 Instead, it is an objective tool used to gain a nuanced understanding of an adversary’s beliefs, will, and intentions, allowing policymakers to transcend both the demonization of the enemy and the assumption of their inherent irrationality.63 By peeling away the layers of official rhetoric and cognitive bias, analysts can accurately interpret how competing narratives create limits on an adversary’s actions or compel them to advance their grand strategy.64

A core methodological approach to building strategic empathy is the examination of “pattern breaks”—surprising, shocking, or high-impact occurrences that deviate from an adversary’s established historical behavior.62 By analyzing why an adversary suddenly shifted tactics or escalated rhetoric (e.g., the invocation of nuclear threat scenarios synchronized with key geopolitical events), intelligence professionals can identify the true drivers of their strategic calculus, testing and refining conventional wisdom.62

A critical component of this process is the practice of “reflexivity,” which requires analysts to view their own nation’s policies and actions from the perspective of the adversary.62 Western strategy has historically been hampered by cognitive bias, analogistic thinking, and a universalist belief that adversaries must naturally view U.S. or NATO actions as inherently defensive and non-threatening.29 Reflexivity forces the acknowledgment that defensive posturing by one state can be genuinely perceived as an existential offensive threat by another. By practicing reflexivity and “red-teaming” scenarios, strategists can identify how their own countermeasures might inadvertently influence an adversary’s constraints or unintentionally provoke fear, leading to an escalatory spiral.62

6.2 Averting the Symmetrical Trap in Geopolitical Conflict

The absence of strategic empathy is frequently cited as a primary catalyst for deterrence failure and the exacerbation of proxy conflicts.29 Misinterpretations of Russian behavior—attributing actions solely to permanent imperial ambition, ideological hostility, or intrinsic irrationality, rather than recognizing the role of perceived geopolitical encirclement or threat escalation technologies—can blind Western policymakers to viable diplomatic off-ramps.29 Historical precedents, such as the U.S. intervention in Afghanistan, underscore how a lack of cognitive empathy and an overreliance on purely rationalist models of power can lead to profound strategic miscalculations regarding an adversary’s resilience and intransigence.69

In the specific context of cognitive warfare, the application of strategic empathy is vital for determining the appropriate mixture of coercive and cooperative policies.62 Understanding the Kremlin’s reliance on the doctrine of reflexive control illuminates a critical insight: symmetrical responses are a strategic trap. If Western democracies attempt to counter the Russian “firehose of falsehood” by deploying their own aggressive disinformation campaigns or mirroring Russian cognitive manipulation, they risk fundamentally degrading the democratic values, institutional trust, and open information environments that they are ostensibly fighting to protect.4 Russia’s overreliance on cognitive warfare has historically caused long-term structural damage to its own society and physical capabilities; mimicking this approach would be disastrous for the West.4

Instead, strategic empathy dictates a posture of “managed enmity.”62 It suggests that the most effective defense against narrative engineering is not counter-manipulation, but radical transparency, societal resilience, and the consistent exposure of adversarial deception through verifiable evidence.18 By understanding the adversary’s intent to provoke a specific, self-destructive reaction, defenders can consciously choose to reject the adversary’s premises, maintain their strategic composure, and neutralize the cognitive threat through decisive, reality-based action.4

Conclusion

The evolution of cognitive warfare has irrevocably altered the landscape of global security. The human mind is no longer merely a participant in conflict; it is the decisive terrain. The Russian Federation’s sophisticated deployment of ecosystem-speed narrative warfare and the relentless “firehose of falsehood” demonstrates a profound commitment to exploiting the structural vulnerabilities of human cognition. By operationalizing cognitive biases and employing the doctrine of reflexive control, adversaries seek to paralyze decision-making, erode societal trust, and secure strategic victories without the deployment of conventional military force.

However, the asymmetry of this battlespace is rapidly narrowing. The integration of artificial intelligence into the intelligence cycle, which facilitates predictive target audience analysis, directional sentiment mapping, and the modeling of social physics, empowers Western institutions to detect and dissect hostile narratives before they achieve critical mass. Programs led by military and defense agencies work to integrate cognitive defense directly into operational planning. Concurrently, the rise of the civilian OSINT vanguard has effectively shattered the state monopoly on intelligence, using geolocated evidence and collaborative verification dashboards as a powerful, transparent deterrent against state-sponsored deception.

Ultimately, technological superiority alone is insufficient to secure the cognitive domain. The successful defense against information warfare requires the disciplined application of strategic empathy. By systematically analyzing pattern breaks and practicing institutional reflexivity, policymakers can accurately interpret adversary intent, sidestep the traps of symmetrical retaliation, and prevent inadvertent military escalation. In the cognitive battlespace, victory is not achieved by manipulating the truth faster than the adversary, but by fortifying the psychological resilience of open societies and transforming destructive informational conflict into managed, predictable competition based on objective reality.

Sources Used

- Cognitive Warfare – NATO’s ACT, accessed March 14, 2026, https://www.act.nato.int/activities/cognitive-warfare/

- Cognitive Warfare 2026: NATO’s Chief Scientist Report as Sentinel …, accessed March 14, 2026, https://inss.ndu.edu/Research-and-Commentary/View-Publications/Article/4371195/cognitive-warfare-2026-natos-chief-scientist-report-as-sentinel-call-for-operat/

- Assessing “Cognitive Warfare”, accessed March 14, 2026, https://irregularwarfare.org/articles/assessing-cognitive-warfare/

- A Primer on Russian Cognitive Warfare | ISW, accessed March 14, 2026, https://understandingwar.org/research/cognitive-warfare/a-primer-on-russian-cognitive-warfare/

- Cognitive warfare: a conceptual analysis of the NATO ACT cognitive warfare exploratory concept – PMC, accessed March 14, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC11565700/

- Cognitive Warfare 2026: NATO’s Chief Scientist Report as Sentinel Call for Operational Readiness – Institute for National Strategic Studies (INSS), accessed March 14, 2026, https://inss.ndu.edu/Media/News/Article/4371195/cognitive-warfare-2026-natos-chief-scientist-report-as-sentinel-call-for-operat/

- Cognitive Warfare: Definition, Framework, and Case Study – arXiv, accessed March 14, 2026, https://arxiv.org/html/2603.05222v1

- The Challenge of AI-Enhanced Cognitive Warfare: A Call to Arms for a Cognitive Defense, accessed March 14, 2026, https://smallwarsjournal.com/2025/01/22/the-challenge-of-ai-enhanced-cognitive-warfare-a-call-to-arms-for-a-cognitive-defense/

- Chapter 3. Cognitive Biases – Read “Measuring Human Capabilities: An Agenda for Basic Research on the Assessment of Individual and Group Performance Potential for Military Accession” at NAP.edu, accessed March 14, 2026, https://www.nationalacademies.org/read/19017/chapter/7

- Research on completing the cognitive bias map, accessed March 14, 2026, https://usercontent.one/wp/www.stimulus.se/wp-content/uploads/2025/11/Research-on-completing-the-Cognitive-Bias-map-v.1.00.pdf?media=1742414512

- Neural Defense — Pentagon’s Brainwave Authentication Project | by, accessed March 14, 2026, https://medium.com/@therealistjug/neural-defense-pentagons-brainwave-authentication-project-aeb239281e9b

- Social Physics: How Social Networks Can Make Us Smarter – Stanford University, accessed March 14, 2026, https://web.stanford.edu/class/cs379c/resources/inverted/content/Books_and_Journal_Articles_on_Consciousness/Social_Physics_How_Social_Networks_Smarter_Pentland/Social_Physics_How_Social_Networks_Smarter_Pentland.epub

- Psychological profiling of world leaders | ORMS Today – PubsOnLine, accessed March 14, 2026, https://pubsonline.informs.org/do/10.1287/orms.2014.06.10/full/

- ASIST: Artificial Social Intelligence for Successful Teams – DARPA, accessed March 14, 2026, https://www.darpa.mil/research/programs/artificial-social-intelligence-for-successful-teams

- Weaponizing cognitive bias in autonomous systems: a framework for black-box inference attacks – PMC, accessed March 14, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC12405252/

- Eksplorium Cognitive Warfare: The Mind as a Battlefield, accessed March 14, 2026, https://cris.unibo.it/retrieve/handle/11585/1018240/a3aaa70d-8c53-4be7-88a8-28ef12a26d1c/

- Identifying cognitive biases to harden in a context of cognitive warfare – ANTIGONE – ANR, accessed March 14, 2026, https://anr.fr/Project-ANR-22-ASGC-0006

- 277. Insights from the Mad Scientist Weaponized Information Series of Virtual Events, accessed March 14, 2026, https://madsciblog.tradoc.army.mil/277-insights-from-the-mad-scientist-weaponized-information-series-of-virtual-events/

- Surveying New Battlegrounds: Ukraine and the Future of Cognitive Warfare, accessed March 14, 2026, https://digitalcommons.usf.edu/jss/vol18/iss4/12/

- Cognitive Biases and Reflexive Control – eGrove, accessed March 14, 2026, https://egrove.olemiss.edu/cgi/viewcontent.cgi?article=1729&context=hon_thesis

- The Russian “Firehose of Falsehood” Propaganda Model – RAND, accessed March 14, 2026, https://www.rand.org/pubs/perspectives/PE198.html

- Kremlin Disinformation Discourse: Media Coverage of the Plane Hijack by Belarus on 23 May 2021 – MDPI, accessed March 14, 2026, https://www.mdpi.com/2673-5172/3/3/34

- Cause for concern: The continuing success and impact of Kremlin disinformation campaigns – Hybrid CoE, accessed March 14, 2026, https://www.hybridcoe.fi/wp-content/uploads/2024/03/20240306-Hybrid-CoE-Working-Paper-29-The-impact-of-Kremlin-disinformation-WEB.pdf

- Firehose of Falsehood – VSquare.org, accessed March 14, 2026, https://vsquare.org/firehose-of-falsehood-russia-disinformation-propaganda-europe/

- Narrating Disinformation: The Templates for Kremlin Lies, accessed March 14, 2026, https://www.ui.se/globalassets/ui.se-eng/publications/sceeus/narrating-disinformation-the-templates-for-kremlin-lies-framsida.pdf

- Vol-25-No-1371 – December 17, 2022 – Flipbook by ሪፖርተር ማህደር | FlipHTML5, accessed March 14, 2026, https://fliphtml5.com/qhkn/xahc/Vol-25-No-1371_-_December_17%2C_2022/

- Russian Military Thought: Concepts and Elements – Army University Press, accessed March 14, 2026, https://www.armyupress.army.mil/Portals/7/Hot-Spots/docs/Russia/Mitre-Thomas.pdf

- The Soviet theory of reflexive control in historical and psychocultural perspective: preliminary study – Calhoun, accessed March 14, 2026, https://calhoun.nps.edu/server/api/core/bitstreams/f770a2ad-2f1b-48c8-9d8b-a34a5be2df1d/content

- U.S.-Russia Proxy War in Ukraine Is a Case of Deterrence Failure – Country Indicators for Foreign Policy (CIFP) – Carleton University, accessed March 14, 2026, https://carleton.ca/cifp/2024/u-s-russia-proxy-war-in-ukraine-is-a-case-of-deterrence-failure/

- The Convergence – The Army’s Mad Scientist Podcast, accessed March 14, 2026, https://podcasts.apple.com/us/podcast/the-convergence-the-armys-mad-scientist-podcast/id1495100075

- Mad Scientist Laboratory – … Exploring the Operational Environment, accessed March 14, 2026, https://madsciblog.tradoc.army.mil/

- SocialSim: Social Simulation for Evaluating Online Messaging Campaigns – DARPA, accessed March 14, 2026, https://www.darpa.mil/research/programs/computational-simulation-of-online-social-behavior

- DARPA Targets Social Media to Root-Out Enemy Disinformation and Propaganda – DSIAC, accessed March 14, 2026, https://dsiac.dtic.mil/articles/darpa-targets-social-media-to-root-out-enemy-disinformation-and-propaganda/

- SMISC: Social Media in Strategic Communication – DARPA, accessed March 14, 2026, https://www.darpa.mil/research/programs/social-media-in-strategic-communication

- Cognitive Warfare | NATO Science and Technology Organization, accessed March 14, 2026, https://www.sto.nato.int/document/cognitive-warfare/

- AI in Precision Persuasion. Unveiling Tactics and Risks on Social Media, accessed March 14, 2026, https://stratcomcoe.org/publications/ai-in-precision-persuasion-unveiling-tactics-and-risks-on-social-media/309

- About Us – Technology Engagement Team – State Department, accessed March 14, 2026, https://2017-2021.state.gov/about-us-technology-engagement-team/

- AI in Support of StratCom Capabilities – NATO Strategic …, accessed March 14, 2026, https://stratcomcoe.org/publications/download/Revised-AI-in-Support-of-StratCom-Capabilities-DIGITAL—Copy.pdf

- Interim Report and Third Quarter Recommendations – DTIC, accessed March 14, 2026, https://apps.dtic.mil/sti/pdfs/AD1112059.pdf

- Technology Solutions – Technology Engagement Division – United States Department of State, accessed March 14, 2026, https://2021-2025.state.gov/technology-engagement-division/technology-solutions/

- A National Cloud for Conducting Disinformation Research at Scale, Saiph Savage and Cristina Martínez Pinto, June 2021 – FAS.org, accessed March 14, 2026, https://fas.org/wp-content/uploads/2021/06/disinfo-cloud.pdf

- Disinformation Countermeasures and Artificial Intelligence | Frontiers Research Topic, accessed March 14, 2026, https://www.frontiersin.org/research-topics/58930/disinformation-countermeasures-and-artificial-intelligence/magazine

- NATO StratCom COE Commissions Cyabra to Uncover AI-Driven Social Media Manipulation in Major 2026 Report – GlobeNewswire, accessed March 14, 2026, https://www.globenewswire.com/news-release/2026/02/11/3236334/0/en/NATO-StratCom-COE-Commissions-Cyabra-to-Uncover-AI-Driven-Social-Media-Manipulation-in-Major-2026-Report.html

- Compare Cyabra vs. iReview in 2026, accessed March 14, 2026, https://slashdot.org/software/comparison/Cyabra-vs-iReview/

- Introducing: Cyabra’s New Conflicting Locations Feature Exposes Deception, accessed March 14, 2026, https://cyabra.com/blog/introducing-cyabras-new-conflicting-locations-feature-exposes-deception/

- Amplified Intelligence: Cyabra’s Platform Updates, accessed March 14, 2026, https://cyabra.com/blog/amplified-intelligence-cyabras-platform-updates/

- Code of Practice on Disinformation – Report of Logically for the period June – December 2022 – Transparency Centre, accessed March 14, 2026, https://disinfocode.eu/reports/download/32

- Reputation and Crisis Management – Logically.ai, accessed March 14, 2026, https://logically.ai/outcomes/reputation-crisis-management

- Threat Prevention – Logically.ai, accessed March 14, 2026, https://logically.ai/outcomes/threat-prevention

- From Online Narratives to Offline Attacks: How Logically Helps Detect the Threat, accessed March 14, 2026, https://logically.ai/case-studies/from-online-narratives-to-offline-attacks

- OSINT in an Age of Disinformation Warfare | Royal United Services Institute – RUSI, accessed March 14, 2026, https://www.rusi.org/explore-our-research/publications/commentary/osint-age-disinformation-warfare

- Bellingcat’s Eliot Higgins Explains Why Ukraine Is Winning the Information War – TIME, accessed March 14, 2026, https://time.com/6155869/bellingcat-eliot-higgins-ukraine-open-source-intelligence/

- OSINT’s influence on the Russian air campaign in Ukraine and the implications for future Western deployments – Atlantic Council, accessed March 14, 2026, https://www.atlanticcouncil.org/content-series/airpower-after-ukraine/osints-influence-on-the-russian-air-campaign-in-ukraine-and-the-implications-for-future-western-deployments/

- Bellingcat – Wikipedia, accessed March 14, 2026, https://en.wikipedia.org/wiki/Bellingcat

- How OSINT shaped reporting on the war in Ukraine – Centre for Information Resilience, accessed March 14, 2026, https://www.info-res.org/eyes-on-russia/articles/how-osint-shaped-reporting-on-the-war-in-ukraine/

- The War on Open-Source Intelligence – Taylor & Francis, accessed March 14, 2026, https://www.tandfonline.com/doi/full/10.1080/0163660X.2025.2554477

- Disinformation and war: How the Violent War in Ukraine Illustrates the Power of Disinformation – Democratic Erosion Consortium, accessed March 14, 2026, https://democratic-erosion.org/2025/04/27/disinformation-ukraine-war/

- Reflections on the Russia-Ukraine War – OAPEN Library, accessed March 14, 2026, https://library.oapen.org/bitstream/handle/20.500.12657/87676/9789400604742.pdf?sequence=8&isAllowed=y

- Eyes on Russia: Documenting Russia’s war on Ukraine – Centre for Information Resilience, accessed March 14, 2026, https://www.info-res.org/eyes-on-russia/articles/eyes-on-russia-documenting-russias-war-on-ukraine/

- Investigating Russia Around the World: A GIJN Toolkit, accessed March 14, 2026, https://gijn.org/resource/investigating-russia-around-the-world-a-gijn-toolkit/

- UKRAINE – Migration and Home Affairs, accessed March 14, 2026, https://home-affairs.ec.europa.eu/document/download/c55cf40d-8d3c-4465-817d-29cfb61ac7e4_en?filename=ran_spotlight_on_ukraine_062022_en.pdf

- Strategic Empathy: Examining Pattern Breaks to Better Understand …, accessed March 14, 2026, https://nonproliferation.org/strategic-empathy/

- Research Report 2023: Strategic Empathy – Middlebury, accessed March 14, 2026, https://www.middlebury.edu/media/35551

- Building Strategic Empathy for Great Power Competition – Global Taiwan Institute, accessed March 14, 2026, https://globaltaiwan.org/2024/05/building-strategic-empathy-for-great-power-competition/

- “Understanding the Adversary: Strategic Empathy and Perspective Taking in National Security” – US Army War College, accessed March 14, 2026, https://ssi.armywarcollege.edu/SSI-Media/Recent-Publications/Article/3947825/understanding-the-adversary-strategic-empathy-and-perspective-taking-in-nationa/

- Decoding manipulative narratives in cognitive warfare: a case study of the Russia-Ukraine conflict – PMC, accessed March 14, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC12460417/

- U.S.-Russia Proxy War in Ukraine: A Case of Deterrence Failure, accessed March 14, 2026, https://peacediplomacy.org/2025/01/23/u-s-russia-proxy-war-in-ukraine-a-case-of-deterrence-failure/

- Strategic empathy & the roots of the Ukraine War | Center for International Studies – CIS, accessed March 14, 2026, https://cis.mit.edu/news/strategic-empathy-roots-ukraine-war

- Strategic Empathy – NewAmerica.org, accessed March 14, 2026, https://static.newamerica.org/attachments/4350-strategic-empathy-2/Waldman%20Strategic%20Empathy_2.3caa1c3d706143f1a8cae6a7d2ce70c7.pdf