1. Executive Summary

The United States Department of Defense (DoD) is actively pursuing a fundamental transformation in its force structure, transitioning from a reliance on exquisite, manned, high-cost platforms toward the mass deployment of small, attritable, autonomous systems. Initiatives such as the Replicator program mandate the fielding of thousands of these systems across multiple domains within an aggressive 18-to-24-month timeline.1 This strategic pivot is largely a response to the “intelligentization” of competitor forces, specifically the People’s Liberation Army (PLA), which aims to leverage artificial intelligence (AI) and advanced technologies to offset traditional U.S. conventional advantages.3 However, an over-fixation on the physical hardware—airframes, propulsion, and payload—has obscured a far more complex systemic bottleneck: the algorithmic architecture required to ensure these systems operate legally, ethically, and safely in contested environments.

Designing and manufacturing a drone is a largely solved engineering problem. Encoding the Law of Armed Conflict (LOAC) and mission-specific Rules of Engagement (ROE) into a machine-learning algorithm is not.5 The current strategic posture risks fielding capabilities that are highly lethal but lack the deterministic boundaries required to comply with international humanitarian law (IHL) and prevent unintended escalation. Autonomous systems can respond to threats faster than a human force can observe, orient, decide, and act, which drives the immense pressure for their rapid deployment.7 Yet without deliberate systemic safeguards, this acceleration introduces unprecedented risks to strategic stability.

This report provides a detailed analysis of the legal, technical, and operational hurdles inherent in deploying autonomous weapon systems (AWS). It examines the friction between the probabilistic nature of modern AI and the rigid, deterministic requirements of military law.8 It evaluates the necessity of shifting legal oversight directly into the software design phase 9, the continuous nature of algorithmic testing and evaluation (T&E) 10, and the severe risks of crisis instability when autonomous systems interact at machine speeds.11 Finally, it outlines the specific policy adaptations and oversight structures leadership must mandate to responsibly govern human-machine teaming (HMT) and lethal autonomy, moving beyond abstract ethical principles toward executable engineering standards.12

2. The Strategic Context and the Hardware Fallacy

The strategic imperative driving the integration of autonomous systems is clear: competitors are heavily investing in AI and autonomous swarm technologies to offset traditional U.S. advantages.2 To counter adversarial advantages in mass, particularly the anti-access/area-denial (A2/AD) capabilities deployed in the Indo-Pacific, the DoD has prioritized the rapid development of All-Domain Attritable Autonomous (ADA2) systems.1

2.1. The Replicator Initiative and the Demand for Mass

Launched by the Deputy Secretary of Defense, the Replicator initiative seeks to catalyze progress in a military innovation cycle that has historically been too slow, shifting the focus to platforms that are “small, smart, cheap, and many”.1 The first iteration, Replicator 1, focuses on fielding thousands of uncrewed systems across aerial, ground, maritime, and space domains, selecting systems like AeroVironment’s Switchblade 600, Anduril’s Altius-600 and Ghost-X, and Performance Drone Works’ C-100.15 The subsequent phase, Replicator 2, targets counter-small unmanned aerial systems (C-sUAS), drawing heavily on operational lessons from contemporary battlefields such as the conflict in Ukraine.15

Despite these clear programmatic goals, public and institutional discourse often defaults to a hardware-centric paradigm. Strategic planners and acquisition professionals frequently focus on range, payload capacity, unit cost, and aerodynamic performance. This approach overlooks the reality that an advanced autonomous system is primarily a software platform housed within a physical shell. The true measure of a system’s combat readiness is not its mechanical reliability, but the maturity of its computer vision models, the resilience of its data fusion algorithms against electromagnetic interference, and the operational integrity of its targeting logic.16

2.2. The Shift to Algorithmic Warfare

When autonomous systems are deployed to execute complex missions in denied electromagnetic environments without continuous communication links, the software becomes the sole arbiter of lethal force.2 If the system’s foundational models have not been rigorously trained to distinguish between a functional anti-aircraft battery and a destroyed civilian vehicle resembling one, the hardware’s kinetic capabilities are irrelevant; the deployment becomes an immediate legal liability and a strategic risk.18

Advances in military applications of AI further strengthen the convergence between the cyber domain of operations (digital code) and the electromagnetic environment (electrons).16 In a crowded and contested spectrum, the distinction between a conventional kinetic attack and a cyber-attack blurs. Adversaries can target model weights through espionage, poison training datasets, spoof sensors on intelligence, surveillance, and reconnaissance (ISR) platforms, or disable data relays.16 The systemic requirement, therefore, is not merely to build a drone, but to construct an entire software assurance lifecycle that moves at the speed of code, rather than the traditional, multi-year acquisition cycles designed for aircraft carriers and fighter jets.10

3. The Legal and Ethical Mandates Governing Autonomy

The deployment of autonomous and semi-autonomous systems is governed by a strict, evolving framework of international and domestic directives. Leadership must recognize that algorithmic weapon systems do not exist in a legal vacuum; they must navigate the same complex web of international treaties, customary law, and domestic policy that governs human warfighters.

3.1. DoD Directive 3000.09 and Definitional Clarity

The foundational document within the DoD is [DoD Directive 3000.09](https://www.esd.whs.mil/portals/54/documents/dd/issuances/dodd/300009p.pdf), which was significantly updated in January 2023 to address the rapid advancements in AI.12 The directive establishes that all autonomous and semi-autonomous weapon systems must be designed to allow commanders and operators to exercise “appropriate levels of human judgment over the use of force”.13

A critical element of this directive is its definitional precision. It differentiates between semi-autonomous systems—which engage specific targets or specific target groups that have been selected by a human operator (e.g., lock-on-after-launch or “fire and forget” munitions)—and fully autonomous weapon systems, which, once activated, can select and engage targets without further human intervention.13 The directive also mandates that the integration of AI capabilities must align with the DoD’s Responsible AI (RAI) Ethical Principles, which dictate that systems must be responsible, equitable, traceable, reliable, and governable.22 Systems must be subjected to rigorous verification and validation (V&V) before deployment to minimize the probability and consequences of failures that could lead to unintended engagements.12

3.2. Integration of the Law of Armed Conflict (LOAC)

Beyond domestic directives, any weapon system deployed by U.S. forces must comply with the core tenets of the LOAC, which is heavily rooted in the 1949 Geneva Conventions and their 1977 Additional Protocols.14 The legality of AWS under IHL ultimately hinges on their capacity to adhere to these foundational principles:

- Distinction: The absolute requirement to differentiate between lawful military objectives (combatants and military equipment) and protected civilian persons or objects.14

- Proportionality: The requirement that the anticipated civilian harm or collateral damage must not be excessive in relation to the concrete and direct military advantage anticipated from the attack.14

- Precaution: The obligation to take all feasible measures in the planning and execution of an attack to avoid, or minimize, civilian harm.14

- The Martens Clause: A fallback principle stating that in cases not covered by international agreements, civilians and combatants remain under the protection of the principles of humanity and the dictates of public conscience.14

While semi-autonomous systems rely on human operators to fulfill these legal obligations prior to launch, fully autonomous systems shift the immense burden of compliance entirely onto the algorithm.9

3.3. Historical Precedents and the Accountability Gap

Although the term “autonomous weapons” conjures modern imagery of swarming drones, the underlying legal concept is not entirely novel. Battlefields have long been shaped by autonomous mechanisms like drifting naval mines, torpedoes, and victim-activated landmines designed to strike targets without real-time human input.14 The 1997 Ottawa Convention prohibits anti-personnel mines precisely because they are inherently indiscriminate; they cannot distinguish between a combatant’s footstep and a child’s.14 However, anti-vehicle mines remain permitted under specific conditions, highlighting that the international community has historically regulated autonomy based on the capability of the weapon to adhere to the principle of distinction.14

The modern challenge is that AI-driven AWS are vastly more complex than pressure-plate mines. As algorithms begin to make decisions that determine lethality, they force a re-examination of accountability.14 If an autonomous system commits an IHL violation, existing criminal liability systems—designed to judge human intent, negligence, and mens rea—are ill-equipped to handle the distribution of responsibility among programmers, procurement officers, and the battlefield commanders who activated the system.14 This accountability gap deprives victims of justice and undermines the preventive power of international law.14

4. The Algorithmic Translation of Legal Frameworks

The core technical challenge facing the defense engineering establishment is the translation of abstract, qualitative legal concepts into quantitative, explicit algorithmic logic.8 LOAC was drafted by humans, for human interpretation, relying heavily on contextual understanding, reasonable judgment, and situational nuance.6 A machine cannot intuitively understand context; it can only execute code.

4.1. The Conflict Between Probabilities and Deterministic Law

Modern machine learning, particularly the deep neural networks utilized for computer vision and autonomous target acquisition, operates fundamentally on statistical probabilities, not deterministic rules.8 An algorithm does not possess semantic knowledge that a target is an enemy tank; rather, it calculates a mathematical probability (e.g., 92% confidence) that a specific cluster of pixels within its sensor feed matches the distribution of its training data labeled as “tank”.19

This probabilistic nature is inherently at odds with strict legal thresholds. If a targeting algorithm misclassifies 8% of the objects it evaluates, and one of those errors results in a kinetic strike on a civilian structure, the statistical character of the system offers no legal defense under IHL. (A 92% confidence score is not itself an 8% error rate; the two coincide only when the model is well calibrated.) Furthermore, while an AI might be trained to recognize the Distinction between a soldier holding a rifle and a civilian holding a rake, the principle of Proportionality requires an incredibly complex value judgment. How does an algorithm assign a numerical, calculable value to abstract concepts like “anticipated military advantage” versus “collateral damage estimation”?9 Algorithms are currently incapable of understanding hostile intent from body language, deducing the strategic value of a target in a broader campaign, or recognizing subtle cues of surrender (rendering a target hors de combat).9
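To make the scale of an 8% error rate concrete, the arithmetic can be sketched as below. This is an illustrative calculation only: it assumes every decision is statistically independent and shares the same error rate, which real systems will not satisfy exactly, and the function names are hypothetical.

```python
def expected_errors(error_rate: float, decisions: int) -> float:
    """Expected number of erroneous decisions out of `decisions` trials."""
    return error_rate * decisions


def prob_at_least_one_error(error_rate: float, decisions: int) -> float:
    """Probability that at least one of `decisions` independent trials is wrong."""
    return 1.0 - (1.0 - error_rate) ** decisions


if __name__ == "__main__":
    # Assumed error rate of 8%, taken from the example in the text.
    for n in (1, 10, 100):
        print(n, expected_errors(0.08, n), round(prob_at_least_one_error(0.08, n), 4))
```

Under these assumptions, 100 decisions yield an expected 8 errors and a better than 99.9% chance of at least one error, which is why a per-decision accuracy figure says little about legal exposure at scale.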

These deficiencies are amplified in non-international armed conflicts and urban warfare, where combatants frequently operate without uniforms among the civilian population. In such environments, AI models are highly susceptible to pattern-recognition failures, especially if they encounter conditions that differ markedly from their sterile training datasets.18

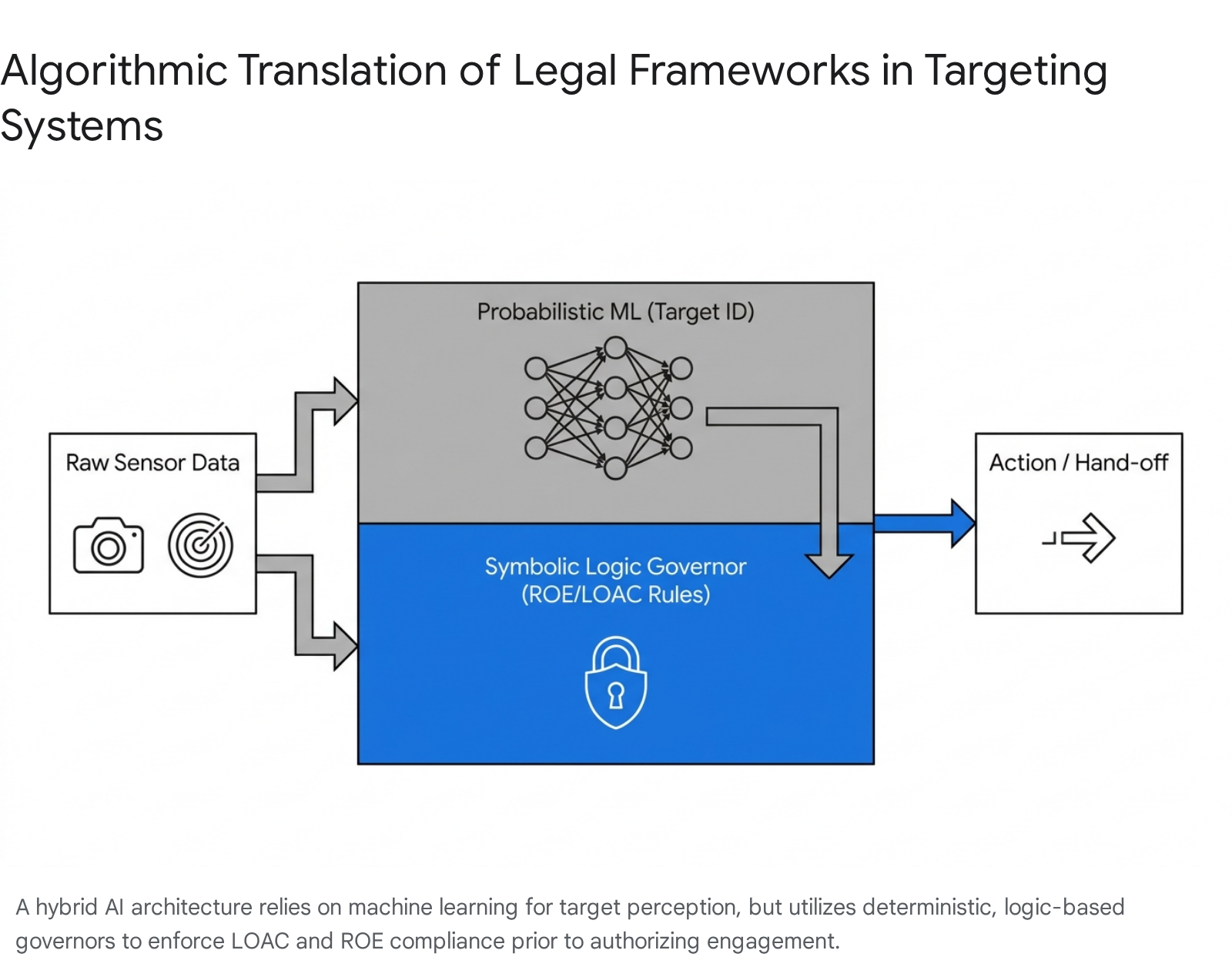

4.2. Probabilistic vs. Logic-Based Modeling

To resolve the friction between statistical probabilities and legal boundaries, system developers must look beyond purely statistical machine learning and incorporate formal methods or logic-based modeling. The two dominant machine learning paradigms—imitation learning and reinforcement learning—can produce highly capable systems, but neither inherently preserves the kind of strict constraint satisfaction required by law.26

| Modeling Approach | Characteristics | Application in Autonomous Weapons | Limitations |
| --- | --- | --- | --- |
| Probabilistic (Machine Learning/Deep Learning) | Data-driven, statistical pattern recognition, relies on massive datasets, operates as a “black box.” | Target identification, dynamic navigation, anomaly detection, multi-sensor data fusion. | Unpredictable in novel environments; lacks interpretability; cannot process abstract legal or ethical concepts natively. |
| Logic-Based (Symbolic AI/Formal Methods) | Rule-based, deterministic, transparent decision trees, strict “if/then” constraints, mathematically verifiable. | Establishing hard operational boundaries, geofencing, enforcing “do not fire” constraints, verifying system states. | Brittle; struggles with highly nuanced, noisy, or unexpected inputs that are not explicitly programmed into the ruleset. |
| Hybrid Architecture (Neuro-Symbolic) | Combines neural networks for perception with symbolic logic for constraint enforcement. | ML identifies the target probabilistically; symbolic logic checks this identification against hardcoded ROE before engagement is authorized. | Highly complex to engineer; potential latency in processing decisions at the tactical edge; requires translation of ROE into code. |

Table 1: Comparative analysis of modeling approaches for integrating operational logic in military AI.

A hybrid architecture is increasingly recognized as a vital pathway forward. The machine learning model provides the sensory processing and perception, while a logic-based “governor” ensures the output complies with predefined rules.8

4.3. Digital Rules of Engagement

Rules of Engagement are a positive statement of intent, underpinned by legal, policy, capability, and operational factors that are specific to a particular theater of operations.28 They provide commanders with control over the implementation of force and provide warfighters with clear guidelines on permissible actions.28

Developing “Algorithmic ROE” involves creating machine-readable constraints that can be adjusted dynamically based on the theater of operations.25 For an autonomous system to be viable, it must be able to accept a digital ROE card that restricts its geographic boundaries, limits its weapon release authority based on positive identification thresholds (e.g., requiring a 95% confidence score for a military vehicle, but a 99% score if human presence is detected), or mandates a hand-off to a human operator if uncertainty crosses a specific threshold.19
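A machine-readable ROE card of the kind described above might be sketched as follows. Everything here is a hypothetical illustration, not a fielded format: the `RoeCard` and `Observation` types, the rectangular geofence, and the `handoff_margin` parameter are assumptions, and the 95%/99% thresholds are simply the example values from the text. The check defaults to denial and never authorizes anything by itself; it only reports whether a perception output falls inside the card's constraints.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DENY = "deny"
    HANDOFF_TO_HUMAN = "handoff_to_human"
    WITHIN_CONSTRAINTS = "within_constraints"


@dataclass(frozen=True)
class RoeCard:
    # Rectangular geofence in decimal degrees (a simplifying assumption).
    min_lat: float
    max_lat: float
    min_lon: float
    max_lon: float
    vehicle_threshold: float = 0.95        # example value from the text
    human_present_threshold: float = 0.99  # example value from the text
    handoff_margin: float = 0.05           # hypothetical band that triggers hand-off


@dataclass(frozen=True)
class Observation:
    lat: float
    lon: float
    confidence: float
    human_presence_detected: bool


def evaluate(card: RoeCard, obs: Observation) -> Decision:
    """Check one perception output against the card; deny by default."""
    inside = (card.min_lat <= obs.lat <= card.max_lat
              and card.min_lon <= obs.lon <= card.max_lon)
    if not inside:
        return Decision.DENY
    required = (card.human_present_threshold if obs.human_presence_detected
                else card.vehicle_threshold)
    if obs.confidence >= required:
        return Decision.WITHIN_CONSTRAINTS
    if obs.confidence >= required - card.handoff_margin:
        # Uncertain but close to the threshold: route to a human operator.
        return Decision.HANDOFF_TO_HUMAN
    return Decision.DENY
```

Keeping the card as immutable data separates what the theater commander and legal advisors set (thresholds, boundaries) from the fixed logic that applies it, so a new theater needs a new card rather than new code.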

However, current academic and military discourse indicates that even if specific algorithmic ROE cards were created for tactical use, there is no certainty that AWS at their current level of technological development could properly interpret and apply these constraints in chaotic battlefield conditions.25 The translation of ROE into code is not merely a programming task; it is a profound legal translation that requires multidisciplinary oversight.

5. Shifting Legal Oversight Left: The Redefined Role of Judge Advocates

The traditional military acquisition and operational process involves Judge Advocates (JAs) conducting legal reviews of weapon systems after they are developed, usually just prior to fielding or during the operational planning phase. In the context of autonomous AI, this arms-length, post-development review is deeply flawed, outdated, and often legally inadequate.9

5.1. The Laboratory as the New Battlefield for LOAC

Because AI algorithms learn from their training data, the goals, parameters, and constraints guiding a learner’s decisions are established in the laboratory, long before a conflict exists.9 The design timeframe is the most critical period because it establishes the foundational logic of the system. Spotting LOAC issues at this stage is absolutely necessary.9

If an algorithm is trained in a civilian or sterile laboratory environment without specific, coded parameters penalizing the targeting of protected objects or individuals who are hors de combat, the final model will inherently lack that legal distinction.9 Relying solely on ad hoc requests for legal support or end-stage weapons reviews ignores how autonomy transforms battlefield LOAC concerns into laboratory LOAC concerns.9

5.2. Judge Advocates as Combat Advisors in Design

To address this systemic flaw, leadership must mandate a cultural and procedural shift, transitioning JAs from being mere end-stage “reviewers” to active “combat advisors” embedded directly within software engineering and design teams.9

By partnering with data scientists and technologists at entities like Army Futures Command (AFC) or the Defense Innovation Unit (DIU), JAs can spot LOAC issues during the nascent stages of technology development.9 They provide critical value during the requirements phase by ensuring that an agency’s official needs adequately capture the necessary parameters for LOAC compliance.9

Furthermore, these JA-engineering teams must define explicit “human touchpoints” within the system architecture. They must clearly delineate where an AI is legally permitted to execute autonomously, and where the law dictates that a human presence or intervention is legally or operationally required before lethal force is applied.9 This early integration prevents the costly and operationally disastrous reality of engineering an exquisite, multi-million-dollar AI system only to have it barred from deployment due to fundamental LOAC incompatibilities discovered during a final, inflexible legal review.

6. Data Logistics and the Reality of Synthetic Environments

Autonomous systems are fundamentally bound by the quality, variety, structure, and integrity of their training data.7 The systemic requirement to build, deploy, and evolve these systems demands massive data logistics, an area where the DoD is currently facing significant friction.

6.1. The Scale of the Data Challenge and Data Masking

The application of computer vision to target acquisition of military combat vehicles requires ample, highly accurate labeled data.29 The scale of this requirement is staggering: the National Geospatial-Intelligence Agency (NGA) is preparing a data-labeling effort estimated to cost nearly $800 million, reflecting the immense resources required to annotate images for training machine-learning models.30

However, data aggregation from multiple classified and unclassified sources often results in datasets that are not formatted for immediate use, slowing down the AI modeling process.31 A significant hurdle is classification. Army investments in data-masking research are critical; employing software tools that can mask sensitive information allows for datasets to be declassified and used safely in unclassified AI-modeling environments, vastly expanding the pool of available training data.31
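The idea of masking can be illustrated with a minimal sketch. It is not a description of any Army tool, and real declassification involves review well beyond field-level masking; the field names, the `mask_record` helper, and the choice to hash identifiers while dropping coordinates are all assumptions made for illustration.

```python
import hashlib
from typing import Any

# Hypothetical field names; a real schema would be defined by the data owner.
PSEUDONYMIZED_FIELDS = {"sensor_id", "collection_unit"}
DROPPED_FIELDS = {"lat", "lon"}


def mask_record(record: dict[str, Any], salt: str) -> dict[str, Any]:
    """Return a copy of `record` with sensitive fields hashed or removed."""
    masked: dict[str, Any] = {}
    for key, value in record.items():
        if key in DROPPED_FIELDS:
            continue  # remove the field entirely
        if key in PSEUDONYMIZED_FIELDS:
            # Salted hash keeps records linkable without exposing the raw value.
            digest = hashlib.sha256(f"{salt}:{value}".encode("utf-8")).hexdigest()
            masked[key] = digest[:12]
        else:
            masked[key] = value
    return masked
```

The training label survives untouched, which is the point: the model-relevant content is preserved while the provenance details that drive classification are stripped or pseudonymized.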

6.2. Training on the Edge of Reality: Synthetic Data

Acquiring labeled data of adversary combat vehicles in diverse, realistic combat environments (e.g., heavy fog, night operations, dense urban clutter, active electronic warfare) is practically impossible to achieve purely through real-world collection. To bridge this critical gap, the DoD and defense contractors rely heavily on synthetic data generated with tools such as the Unreal Engine 5 game engine and generative AI models such as Stable Diffusion.19

Combining real and synthetic data improves object detection performance significantly.29 However, synthetic environments carry inherent risks. If the synthetic data inadvertently encodes biases, lacks sufficient variance, or fails to accurately represent the complex physical realities of the electromagnetic spectrum (such as infrared signatures and thermal bleed), the model will experience severe performance degradation when transferred to a live combat environment.19 A model that performs flawlessly in a sanitized simulation may fail catastrophically when confronted with the noisy, chaotic data of a real-world battlefield.

[Figure: system architecture of the data pipeline, from raw sensor collection and synthetic data generation, through data masking and labeling, to model training and deployment at the tactical edge]

6.3. Tactical Bandwidth and Model Decay

A critical, often overlooked vulnerability of military AI is the bandwidth constraint at the tactical edge.19 In a denied, degraded, intermittent, or limited (DDIL) environment, maintaining continuous, high-bandwidth communication with forward-deployed autonomous swarms is highly unlikely.

If an adversary introduces a new countermeasure, changes their camouflage techniques, or if the operational environment shifts rapidly (e.g., weather changes affecting sensor fidelity), the deployed AI model may begin to suffer from “model drift”.19 The algorithm’s accuracy degrades, increasing the risk of false positives, fratricide, or civilian casualties. Because neural network updates are data-heavy, pushing a new, retrained model to a drone mid-flight or deep within a contested zone is technologically challenging.19
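One simple way a deployed system could watch for drift without a data link is to compare recent confidence scores against a baseline recorded during testing. The sketch below assumes this approach; the class name, window size, and tolerance are hypothetical. Falling confidence is only a proxy, since a drifted model can also be confidently wrong, so a real monitor would need additional signals.

```python
from collections import deque
from statistics import mean


class ConfidenceDriftMonitor:
    """Flags drift when the rolling mean confidence falls below a baseline."""

    def __init__(self, baseline_mean: float, tolerance: float, window: int) -> None:
        self.baseline_mean = baseline_mean  # mean confidence observed during T&E
        self.tolerance = tolerance          # allowed drop before flagging
        self._scores: deque[float] = deque(maxlen=window)

    def update(self, confidence: float) -> bool:
        """Record one score; return True if drift is currently flagged."""
        self._scores.append(confidence)
        if len(self._scores) < self._scores.maxlen:
            return False  # not enough evidence yet
        return self.baseline_mean - mean(self._scores) > self.tolerance
```

Because the monitor runs entirely on board and stores only a short window of scores, it adds no demand on the constrained communications link.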

Leadership must recognize that an autonomous system’s legal compliance has an operational expiration date. Without the reliable ability to update models in theater, systems must be programmed with graceful degradation protocols—automatically reducing their level of autonomy, reverting to safer baselines, or returning to base when their internal confidence scores drop below legally permissible thresholds.10
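A graceful degradation protocol of this kind can be sketched as a small decision ladder. The mode names, the two confidence floors, and the 72-hour model age limit are placeholder assumptions rather than values from any directive; in practice they would be set through legal and operational review.

```python
from enum import Enum


class Mode(Enum):
    SUPERVISED_AUTONOMY = "supervised_autonomy"
    HUMAN_APPROVAL_REQUIRED = "human_approval_required"
    RETURN_TO_BASE = "return_to_base"


def select_mode(
    confidence: float,
    model_age_hours: float,
    *,
    full_autonomy_floor: float = 0.95,   # hypothetical threshold
    abort_floor: float = 0.80,           # hypothetical threshold
    max_model_age_hours: float = 72.0,   # hypothetical "expiration date"
) -> Mode:
    """Pick the most restrictive mode that the current state requires."""
    if model_age_hours > max_model_age_hours or confidence < abort_floor:
        return Mode.RETURN_TO_BASE
    if confidence < full_autonomy_floor:
        return Mode.HUMAN_APPROVAL_REQUIRED
    return Mode.SUPERVISED_AUTONOMY
```

The checks are ordered from most to least restrictive, so any single failing condition can only reduce autonomy, never increase it.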

7. The Lifecycle: Redefining Test and Evaluation (T&E)

The historical DoD paradigm of acquiring software via “block upgrades” every few years is entirely obsolete in the age of algorithmic warfare.32 As adversaries rapidly adapt to U.S. AI behaviors and capabilities, the U.S. military must be prepared to update algorithms in a matter of hours or days, not months or years.32 This reality requires a radical overhaul of the DoD Test and Evaluation (T&E) frameworks.

7.1. Moving from Static Testing to the T&E Continuum

The former director of the Joint Artificial Intelligence Center (JAIC) has noted that the Pentagon is not yet well-postured for the T&E of AI, which requires continuous updating.32 If an AI system is not updated continuously, “it’s going to go stale. It’s not going to work as advertised. The adversary is going to corrupt it, and it’ll be worse than not having AI in the first place”.32

To address this, the Developmental Test and Evaluation (DT&E) of Autonomous Systems Guidebook establishes that autonomous systems require a “T&E continuum”.10 Because self-learning systems adapt dynamically to new data and changing environments, a system deemed safe and LOAC-compliant on a Tuesday may exhibit unpredictable, non-compliant behavior by a Thursday.10 Continuous Testing (CT) replaces rigid, static milestones, relying on iterative testing where models are evaluated and refined as new data emerges.10
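In software terms, continuous testing resembles a release gate that every retrained model must pass before it replaces the fielded one. The following is a rough sketch under stated assumptions: the `EvalResult` fields, the two metrics chosen, and the thresholds are hypothetical and are not drawn from the Guidebook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    false_positive_rate: float
    recall: float


def release_gate(
    candidate: EvalResult,
    fielded: EvalResult,
    *,
    max_false_positive_rate: float = 0.01,  # hypothetical ceiling
    max_recall_drop: float = 0.02,          # hypothetical regression budget
) -> tuple[bool, list[str]]:
    """Return (approved, reasons); any reason blocks the release."""
    reasons: list[str] = []
    if candidate.false_positive_rate > max_false_positive_rate:
        reasons.append("false positive rate exceeds ceiling")
    if fielded.recall - candidate.recall > max_recall_drop:
        reasons.append("recall regressed against fielded model")
    return (not reasons, reasons)
```

Running the same gate on every update, against an evaluation set that grows as new field data arrives, is what turns a one-time milestone into a continuum.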

This process requires decomposing the dynamic observe-orient-decide-act (OODA) loop of the algorithm to evaluate exactly how the system perceives its environment, processes information, and makes decisions.10 The Chief Digital and Artificial Intelligence Office (CDAO) is currently producing best practices and an Assurance Case Framework for Trustworthy AI to guide these exact processes.33

7.2. Runtime Assurance and Adversarial Testing

To safely field these systems despite their inherent unpredictability, the DoD employs Runtime Assurance (RTA) mechanisms.10 RTA acts as a separate, highly verified software monitor that runs parallel to the complex AI model in real-time. If the AI proposes an action that violates its safety bounds, geofences, or programmed ROE constraints, the RTA intervenes, overriding the AI and returning the system to a safe, pre-approved baseline state.10
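The RTA pattern can be sketched as a small, separately verifiable wrapper around whatever the complex model proposes. This is an illustration under simplifying assumptions, not the DoD's implementation: the `Action` type, the circular geofence centered on the origin, and the `hold_and_loiter` baseline are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    target_x_km: float
    target_y_km: float


@dataclass(frozen=True)
class SafetyBounds:
    geofence_radius_km: float
    allowed_actions: frozenset[str]


# Pre-approved fallback state (hypothetical).
SAFE_BASELINE = Action("hold_and_loiter", 0.0, 0.0)


def runtime_assurance(proposed: Action, bounds: SafetyBounds) -> Action:
    """Pass the proposed action through only if it satisfies every bound."""
    if proposed.name not in bounds.allowed_actions:
        return SAFE_BASELINE
    distance = math.hypot(proposed.target_x_km, proposed.target_y_km)
    if distance > bounds.geofence_radius_km:
        return SAFE_BASELINE
    return proposed
```

The monitor is deliberately simple: it contains no learned components, so its behavior can be exhaustively reviewed even when the model it supervises cannot.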

Furthermore, T&E must heavily involve continuous adversarial testing.10 Testers must act as the enemy, actively attempting to poison the training datasets, spoof the sensors, exploit algorithmic biases, or introduce chaotic variables.10 The goal is to identify exploitable vulnerabilities before deployment and ensure that when the system inevitably encounters adversarial interference, it fails safely rather than catastrophically.

8. Command Architecture and Human-Machine Teaming

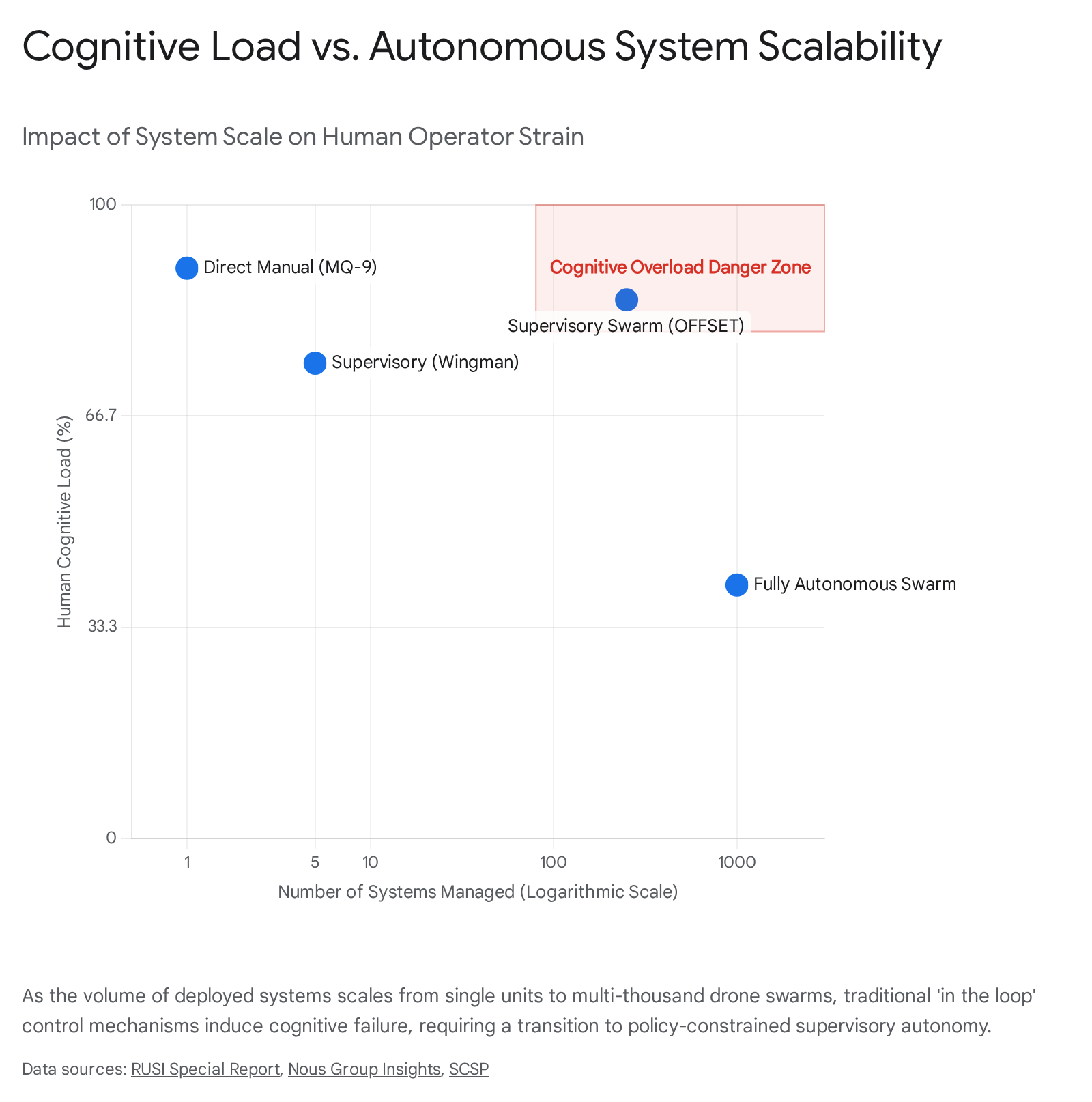

The deployment of thousands of attritable autonomous systems, the core goal of Replicator, inherently alters the structure of military command and control (C2).35 The traditional paradigm of one human operator remotely piloting one drone (e.g., an MQ-9 Reaper) cannot scale to the numbers currently envisioned.37

8.1. Redefining Human Control and Cognitive Load

To manage mass, operators must transition from being “in the loop” (direct manual control of every action) to “on the loop” (supervisory control), managing entire fleets or swarms of systems simultaneously.35 This constitutes the evolution of Human-Machine Teaming (HMT), which combines human strategic intent, contextual awareness, and moral judgment with the immense processing speed, endurance, and data synthesis of machines.38

However, this transition introduces severe cognitive burdens on the warfighter.35 If a single infantry unit is acting as a controller for up to 250 drones—a scenario explored in DARPA’s OFFSET program—the human operator cannot possibly review the sensor feed of every individual drone prior to a lethal engagement.37

| Control Paradigm | Human Role | Machine Role | Scalability | LOAC Liability Risk |
| --- | --- | --- | --- | --- |
| Human In the Loop (HITL) | Manually selects target, guides system, and directly authorizes weapon release. | Navigation, stabilization, sensor tracking, basic flight controls. | Very Low (1:1 ratio limits mass deployment) | Low (Human assumes full judgment and compliance burden). |
| Human On the Loop (HOTL) | Monitors system activities; retains active veto power to abort engagements. | Identifies targets, computes firing solutions, requests authorization to engage. | Medium (1:Many ratio, enables limited swarming) | Moderate (Risk of automation bias / cognitive overload leading to blind trust). |
| Human Out of the Loop (HOOTL) | Defines broad mission parameters, geographic bounds, and ROE prior to launch. | Fully autonomous target selection, dynamic maneuvering, and engagement within defined bounds. | High (Enables massive decentralized swarm operations) | High (Algorithm assumes the entire compliance burden in unpredictable environments). |

Table 2: The spectrum of human control in autonomous weapon systems, illustrating that scalability and LOAC liability risk rise together as direct human control decreases.

To mitigate cognitive overload, the HMT interface must act as an intelligent filter. It must synthesize the chaotic battlespace, presenting the human operator only with critical anomalies, strategic deviations, or specific requests for engagement authorization that require human contextual judgment.39
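The filtering role of the interface can be sketched as a triage step between the swarm and the operator. The event kinds, the severity score, and the fixed `capacity` are hypothetical assumptions. The sketch defers overflow items rather than discarding them, since silently dropping a request would itself be a safety risk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    drone_id: str
    kind: str        # e.g. "routine_status", "anomaly", "authorization_request"
    severity: float  # 0.0 (minor) to 1.0 (critical), assumed to be precomputed


# Event kinds that require human contextual judgment (hypothetical set).
NEEDS_HUMAN = {"anomaly", "strategic_deviation", "authorization_request"}


def triage(events: list[Event], capacity: int) -> tuple[list[Event], list[Event]]:
    """Split events into (shown now, deferred), most severe first."""
    relevant = [e for e in events if e.kind in NEEDS_HUMAN]
    ranked = sorted(relevant, key=lambda e: e.severity, reverse=True)
    return ranked[:capacity], ranked[capacity:]
```

Routine status messages never reach the operator, while the deferred queue makes visible how much human attention the swarm is demanding beyond what one person can supply.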

8.2. Swarm Dynamics, Emergent Behavior, and Logistics

Autonomous swarms present a unique operational and legal challenge. In a true swarm, individual drones are not necessarily programmed with the entire mission plan, nor are they centrally controlled by a single node. Instead, they operate on decentralized algorithms—similar to flocking behavior in nature—sharing data, adapting to interference independently, and collectively solving problems.40 Programs like SATURN aim to provide this resilient, decentralized behavior to heterogeneous swarms of unlimited size.39 The 2016 Perdix drone test, launching over 100 micro-drones from F/A-18s, successfully demonstrated collective decision-making and self-healing swarm behavior.40

While highly resilient to communications jamming, decentralized swarms operate through emergent behavior—complex actions that arise from the interaction of the swarm members rather than explicit, top-down programming. Overseeing emergent behavior requires command structures that prioritize strict boundary setting (e.g., absolute geofencing, maximum loiter times, strict target-type restrictions) rather than micro-management, ensuring that the swarm’s collective, emergent action never violates the overarching ROE.41

Furthermore, these autonomous capabilities are not limited to kinetic strikes. Drone technology is increasingly viewed as a solution for sustainment and logistics operations.42 Autonomous swarms can provide continuous monitoring and security for supply convoys and logistics nodes in large-scale combat operations, protecting vulnerable sustainment forces without requiring dedicated, manned security details.42

9. Crisis Stability and the Risk of Unintended Escalation

Perhaps the most severe strategic risk associated with the proliferation of autonomous weapons is their potential to radically undermine crisis stability.43 The integration of AI into military platforms inherently compresses the timeline of decision-making. Operations transition from “human speed”—which allows for pauses, diplomatic intervention, and the assessment of strategic intent—to “machine speed”.44

9.1. Algorithmic Flash Wars

If U.S. autonomous swarms encounter adversarial autonomous systems in a contested zone, the interactions and calculations occur in milliseconds.11 Without human pauses to assess intent or de-escalate, there is a profound risk of miscalculation. A routine defensive maneuver by a U.S. drone, executed autonomously to avoid a collision, might be mathematically interpreted by an adversary’s AI as an aggressive, pre-launch attack profile.11

This misperception could trigger an automated counter-attack, generating an immediate, uncontrolled escalation spiral—a phenomenon termed a “flash war”—before human commanders in either nation are even aware an engagement has occurred.47 The National Security Commission on AI (NSCAI) explicitly warned that AI-enabled systems reduce the time and space available for de-escalatory measures.11

9.2. Escalation and Strategic Deterrence

The rapid deployment of autonomous capabilities can also inadvertently threaten a competitor’s strategic deterrents, potentially lowering the threshold for the use of weapons of mass destruction (WMD). If an adversary perceives that U.S. autonomous swarms possess the surveillance density and autonomy to locate, track, and strike their second-strike nuclear assets, they may adopt a destabilizing “use it or lose it” posture during a conventional crisis.45

To mitigate these severe risks, the DoD must actively consider the implementation of automated “de-escalation routines” within its algorithms. More importantly, the U.S. must support international confidence-building measures (CBMs).43 Unilateral declarations or bilateral agreements to maintain positive human control over nuclear launch decisions, or establishing technical protocols for autonomous systems to broadcast benign intent in peacetime scenarios, are necessary steps to preserve strategic stability.43

10. Required Oversight and Policy Adaptations

Hardware development will consistently outpace the evolution of doctrinal and ethical frameworks unless DoD leadership implements rigid, proactive oversight structures. DoDD 3000.09 provides a foundational starting point, but it requires aggressive enforcement and expansion to address the nuanced realities of algorithmic warfare.13

10.1. Strengthening Senior Review Mechanisms

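Currently, fully autonomous weapon systems must undergo a rigorous Senior Review before entering formal development and again before fielding.12 Under DoDD 3000.09, the Under Secretary of Defense for Policy, the Under Secretary of Defense for Research and Engineering, and the Vice Chairman of the Joint Chiefs of Staff approve entry into formal development; for the fielding decision, the Under Secretary of Defense for Acquisition and Sustainment takes the place of the Under Secretary of Defense for Research and Engineering. Leadership must ensure these reviews are strictly enforced and are never treated as mere bureaucratic formalities to be waived for the sake of acquisition speed.50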

The newly established Autonomous Weapon Systems Working Group must be empowered with the technical expertise to halt programs that fail to prove algorithmic interpretability.12 If an AI model operates as an impenetrable “black box” where developers and commanders cannot adequately explain why the algorithm selected a specific target, it cannot be legally certified for combat operations, regardless of its statistical success rate in simulation.14
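The principle that interpretability is a hard gate, not a factor to be traded against performance, can be expressed as a simple ordering of checks. The sketch below is a hypothetical illustration: the `ReviewPackage` fields, the `review_gate` function, and both thresholds are assumptions invented for the example and do not reflect any actual DoD review criteria.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ReviewPackage:
    """Hypothetical evidence a program submits to the working group."""
    program: str
    sim_success_rate: float    # fraction of simulated runs judged correct
    explained_fraction: float  # fraction of sampled decisions with an
                               # explanation that reviewers accepted


def review_gate(pkg: ReviewPackage,
                min_explained: float = 0.99,
                min_success: float = 0.95) -> Tuple[bool, str]:
    """Apply the interpretability check first, so that a high simulation
    score can never offset a failure to explain decisions.
    Both thresholds are illustrative assumptions.
    """
    if pkg.explained_fraction < min_explained:
        return False, "halt: interpretability not demonstrated"
    if pkg.sim_success_rate < min_success:
        return False, "halt: performance below threshold"
    return True, "eligible to proceed to Senior Review"


# A strong simulation record does not rescue an unexplained model.
print(review_gate(ReviewPackage("demo-a", sim_success_rate=0.999, explained_fraction=0.80)))
print(review_gate(ReviewPackage("demo-b", sim_success_rate=0.97, explained_fraction=0.995)))
```

Passing the gate in this sketch means only that a program may move on to review; it is not a stand-in for legal certification.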

10.2. Closing Policy Loopholes and Standardizing Frameworks

The 2023 iteration of DoDD 3000.09 has faced legitimate critique for applying exclusively to the Department of Defense. This leaves a concerning policy vacuum for autonomous systems utilized by intelligence agencies, such as the CIA, which have historically played an active role in the use of armed drones outside traditional armed conflict environments.21 Leadership should advocate for a comprehensive, government-wide policy that standardizes the ethical development and use of lethal autonomy across all federal agencies.

Furthermore, leadership must mandate the use of Algorithmic Impact Assessments prior to deployment.27 These proactive assessments evaluate the potential societal harms, escalation risks, and LOAC vulnerabilities inherent in a system’s training data before it reaches the battlefield. Finally, legal accountability frameworks must be clarified in doctrine. If an autonomous system commits a LOAC violation due to unforeseen model drift, flawed synthetic training data, or unpredictable emergent swarm behavior, the chain of legal accountability must be unambiguously established to uphold the integrity of international law. That chain runs from the battlefield commander who authorized the deployment to the acquisition officer and the engineer who trained the model.14

11. Conclusion

The pursuit of algorithmic warfare and the deployment of autonomous swarms offer undeniable tactical advantages, providing the U.S. military with essential mass, speed, and operational resilience in heavily contested A2/AD environments. However, the true barrier to operationalizing these capabilities is not the industrial base’s ability to produce hardware; it is the immense systemic challenge of integrating the Law of Armed Conflict and the Rules of Engagement into software code.

To legally and safely enable warfighters to employ these advanced systems, DoD leadership must definitively shift from a platform-centric acquisition mindset to a software-assurance mindset. This transformation requires embedding legal counsel into the foundational stages of algorithmic design, abandoning outdated static testing methodologies in favor of continuous evaluation, and enforcing strict, logic-based constraints on probabilistic machine learning models. Without these systemic policy adaptations, the aggressive timeline for fielding autonomous systems risks not only widespread legal violations and ethical failures but also the severe, uncontrollable destabilization of global crisis management. Accountability, legal adherence, and ethical principles must be engineered into the code just as deliberately as the physical payload is integrated into the airframe.

Sources Used

- Deputy Secretary of Defense Kathleen Hicks’ Remarks: “Unpacking the Replicator Initiative”, accessed April 24, 2026, https://www.war.gov/News/Speeches/Speech/Article/3517213/deputy-secretary-of-defense-kathleen-hicks-remarks-unpacking-the-replicator-ini/

- The Autonomous Arsenal in Defense of Taiwan: Technology, Law, and Policy of the Replicator Initiative | The Belfer Center for Science and International Affairs, accessed April 24, 2026, https://www.belfercenter.org/replicator-autonomous-weapons-taiwan

- Code, Command, and Conflict: Charting the Future of Military AI – Belfer Center, accessed April 24, 2026, https://www.belfercenter.org/research-analysis/code-command-and-conflict-charting-future-military-ai

- The Coming Military AI Revolution – Army University Press, accessed April 24, 2026, https://www.armyupress.army.mil/Journals/Military-Review/English-Edition-Archives/May-June-2024/MJ-24-Glonek/

- Rules of Engagement as a Regulatory Framework for Military Artificial Intelligence, accessed April 24, 2026, https://lieber.westpoint.edu/rules-engagement-regulatory-framework-military-artificial-intelligence/

- Encoding the Enemy: The Politics Within and Around Ethical Algorithmic War, accessed April 24, 2026, https://www.tandfonline.com/doi/full/10.1080/13600826.2023.2234395

- Ethical, Legal and Operational Challenges of AI-Driven Warfare and Autonomous Systems – Air University (AU), accessed April 24, 2026, https://www.airuniversity.af.edu/Office-of-Sponsored-Programs/Research/Article-Display/Article/4459074/ethical-legal-and-operational-challenges-of-ai-driven-warfare-and-autonomous-sy/

- Human-Guided Learning for Probabilistic Logic Models – PMC – NIH, accessed April 24, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC7805928/

- LAWS AND LAWYERS: LETHAL AUTONOMUS WEAPONS BRING …, accessed April 24, 2026, https://tjaglcs.army.mil/Portals/0/Publications/Military%20Law%20Review/2020%20(Vol%20228)/Vol.%20228%20-%20Issue%201/2020-Issue-1-Laws%20and%20Lawyers.pdf?ver=KtuYW-rETtMhd_XYtzwmpA%3D%3D

- Developmental Test and Evaluation of Autonomous … – USD(R&E), accessed April 24, 2026, https://www.cto.mil/wp-content/uploads/2025/10/DTE-of-AS-GB.pdf

- The Risks – Autonomous Weapons Systems, accessed April 24, 2026, https://autonomousweapons.org/the-risks/

- DoD Directive 3000.09, “Autonomy in Weapon Systems,” January 25, 2023 – Executive Services Directorate, accessed April 24, 2026, https://www.esd.whs.mil/portals/54/documents/dd/issuances/dodd/300009p.pdf

- Pentagon updates guidance for development, fielding and employment of autonomous weapon systems | DefenseScoop, accessed April 24, 2026, https://defensescoop.com/2023/01/25/pentagon-updates-guidance-for-development-fielding-and-employment-of-autonomous-weapon-systems/

- Legal Accountability for AI-Driven Autonomous Weapons – Lieber Institute – West Point, accessed April 24, 2026, https://lieber.westpoint.edu/legal-accountability-ai-driven-autonomous-weapons/

- Deep Dive: Pentagon’s Replicator Initiative Raises Questions | Inkstick, accessed April 24, 2026, https://inkstickmedia.com/deep-dive-pentagons-replicator-initiative-raises-questions/

- How NATO can integrate AI to prevail in future algorithmic warfare – Atlantic Council, accessed April 24, 2026, https://www.atlanticcouncil.org/in-depth-research-reports/report/how-nato-can-integrate-ai-to-prevail-in-future-algorithmic-warfare/

- January/February 2022 – Collaborative Autonomy, Swarming Advancing in Next Generation Military Drone Systems – Aviation Today, accessed April 24, 2026, https://interactive.aviationtoday.com/avionicsmagazine/january-february-2022/collaborative-autonomy-swarming-advancing-in-next-generation-military-drone-systems/

- Autonomous Weapons Systems and Proportionality: The Need for Regulation, accessed April 24, 2026, https://scholarlycommons.law.case.edu/cgi/viewcontent.cgi?article=2713&context=jil

- Train AI Models for Combat: Real-Time Object Classification – Xcelligen Inc., accessed April 24, 2026, https://www.xcelligen.com/how-ai-models-trained-for-object-classification-in-combat-scenarios/

- DOD Updates Autonomy in Weapons System Directive – Department of War, accessed April 24, 2026, https://www.war.gov/News/News-Stories/Article/Article/3278065/dod-updates-autonomy-in-weapons-system-directive/

- New US Policy on Autonomous Weapons Flawed | International Human Rights Clinic, accessed April 24, 2026, https://humanrightsclinic.law.harvard.edu/new-us-policy-on-autonomous-weapons-flawed/

- NOTEWORTHY: DoD Autonomous Weapons Policy – CNAS, accessed April 24, 2026, https://www.cnas.org/press/press-note/noteworthy-dod-autonomous-weapons-policy

- U.S. Department of Defense Responsible Artificial Intelligence Strategy and Implementation Pathway, accessed April 24, 2026, https://media.defense.gov/2024/Oct/26/2003571790/-1/-1/0/2024-06-RAI-STRATEGY-IMPLEMENTATION-PATHWAY.PDF

- A Comparative Analysis of the Definitions of Autonomous Weapons Systems – PMC, accessed April 24, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC9399191/

- XIV-MPH.pdf – Międzynarodowe Prawo Humanitarne Konfliktów Zbrojnych, accessed April 24, 2026, https://mph.amw.gdynia.pl/wp-content/uploads/2025/03/XIV-MPH.pdf

- Can Complementary Learning Methods Teach AI the Laws of War? – CIP, accessed April 24, 2026, https://internationalpolicy.org/publications/can-complementary-learning-methods-teach-ai-the-laws-of-war/

- Alignment and Safety in Large Language Models: Safety Mechanisms, Training Paradigms, and Emerging Challenges – arXiv, accessed April 24, 2026, https://arxiv.org/html/2507.19672v1

- Rules of Engagement as a Security Protocol and the Challenges posed by Autonomous Weapon Systems – European Consortium for Political Research (ECPR), accessed April 24, 2026, https://ecpr.eu/Events/Event/PaperDetails/77350

- Synthetic Data for Target Acquisition | ITEA Journal, accessed April 24, 2026, https://itea.org/journals/volume-45-4/synthetic-data-for-target-acquisition/

- GEOINT Artificial Intelligence, accessed April 24, 2026, https://www.nga.mil/news/GEOINT_Artificial_Intelligence_.html

- Integrating Artificial Intelligence and Machine Learning Technologies into Common Operating Picture and Course of Action Develop, accessed April 24, 2026, https://press.armywarcollege.edu/cgi/viewcontent.cgi?article=1976&context=monographs

- Military AI Will Mean Overhauling Test: DOD’s First AI Chief – Air & Space Forces Magazine, accessed April 24, 2026, https://www.airandspaceforces.com/military-ai-overhauling-test/

- Developmental Test & Evaluation of Artificial Intelligence Enabled Systems, DTE&A – DoW Research & Engineering, OUSW(R&E), accessed April 24, 2026, https://www.cto.mil/dtea/te_aies/

- Human Systems Integration Test and Evaluation of Artificial Intelligence-Enabled Capabilities, accessed April 24, 2026, https://www.ai.mil/Portals/137/Documents/Resources%20Page/Human%20Systems%20Integration%20Test%20and%20Evaluation%20of%20AI-Enabled%20Capabilities%20Framework.pdf

- Military Command and Control evolution in an age of human-machine teaming, accessed April 24, 2026, https://nousgroup.com/insights/military-command-and-control-evolution-in-an-age-of-human-machine-teaming

- Reimagining Military C2 in the Age of AI – Revolution, Regression, or Evolution – Special Competitive Studies Project (SCSP), accessed April 24, 2026, https://www.scsp.ai/wp-content/uploads/2024/12/DPS-Reimagining-Military-C2-in-the-Age-of-AI.pdf

- Leveraging Human–Machine Teaming – RUSI, accessed April 24, 2026, https://static.rusi.org/human-machine-teaming-sr-jan-2024.pdf

- Battlefield Applications for Human-Machine Teaming: – Atlantic Council, accessed April 24, 2026, https://www.atlanticcouncil.org/wp-content/uploads/2023/08/Battlefield-Applications-for-HMT.pdf

- Protecting Warfighters with Drone Swarms: Charles River Analytics’ Mike Farry Presents at Human-Machine Teaming Workshop, accessed April 24, 2026, https://cra.com/protecting-warfighters-with-drone-swarms-charles-river-analytics-mike-farry-presents-at-human-machine-teaming-workshop/

- HUMAN-MACHINE TEAMING FOR FUTURE GROUND FORCES – CSBA, accessed April 24, 2026, https://csbaonline.org/uploads/documents/Human_Machine_Teaming_FinalFormat.pdf

- Designing for Doctrine: Decentralized Execution in Unmanned Swarms – Air University, accessed April 24, 2026, https://www.airuniversity.af.edu/Wild-Blue-Yonder/Articles/Article-Display/Article/2703656/designing-for-doctrine-decentralized-execution-in-unmanned-swarms/

- Swarm Technology in Sustainment Operations | Article | The United States Army, accessed April 24, 2026, https://www.army.mil/article/282467/swarm_technology_in_sustainment_operations

- Artificial Intelligence and the Future of Strategic Stability – Texas National Security Review, accessed April 24, 2026, https://tnsr.org/roundtable/artificial-intelligence-and-the-future-of-strategic-stability/

- Artificial Intelligence – Lieber Institute – West Point, accessed April 24, 2026, https://lieber.westpoint.edu/articles-of-war/topics/artificial-intelligence/

- Impact of Military Artificial Intelligence on Nuclear Escalation Risk – SIPRI, accessed April 24, 2026, https://www.sipri.org/sites/default/files/2025-06/2025_6_ai_and_nuclear_risk.pdf

- Countering Swarms: Strategic Considerations and Opportunities in Drone Warfare, accessed April 24, 2026, https://ndupress.ndu.edu/Media/News/News-Article-View/Article/3197193/countering-swarms-strategic-considerations-and-opportunities-in-drone-warfare/

- The Risks of Autonomous Weapons Systems for Crisis Stability and Conflict Escalation in Future U.S.-Russia Confrontations | RAND, accessed April 24, 2026, https://www.rand.org/pubs/commentary/2020/06/the-risks-of-autonomous-weapons-systems-for-crisis.html

- Resolved: States ought to ban lethal autonomous weapons. – Debate, accessed April 24, 2026, https://debate.utah.edu/high_school_outreach/briefs/LD-20-21-Autonomous-Weapons.docx

- Autonomous Weapons Systems and the Laws of War | Arms Control Association, accessed April 24, 2026, https://www.armscontrol.org/act/2019-03/features/autonomous-weapons-systems-and-laws-war

- Review of the 2023 US Policy on Autonomy in Weapons Systems | Human Rights Watch, accessed April 24, 2026, https://www.hrw.org/news/2023/02/14/review-2023-us-policy-autonomy-weapons-systems